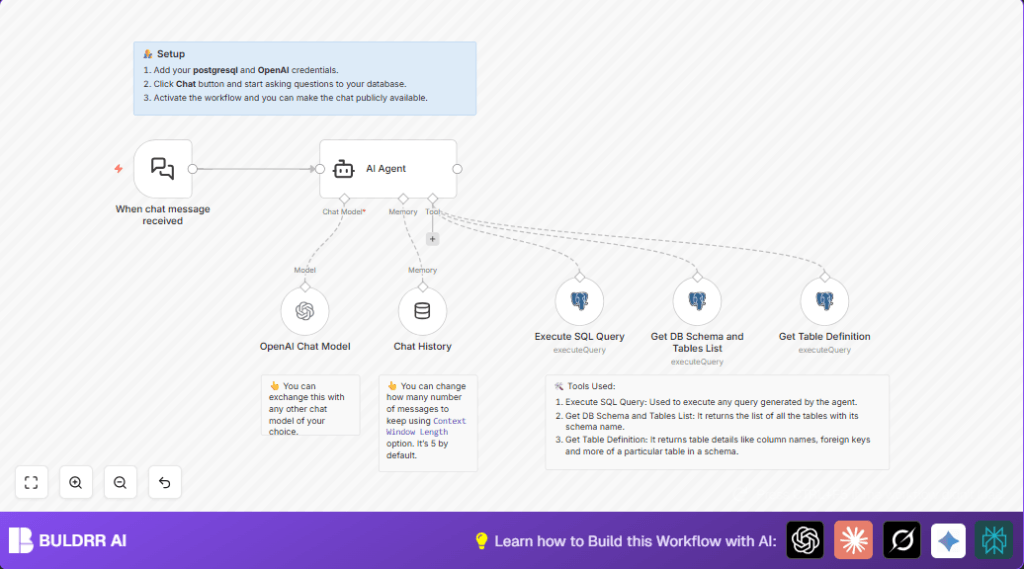

What this workflow does

This workflow lets users chat with a PostgreSQL database using natural language inside n8n. It removes the need to write SQL by hand: an AI agent generates the query from the user's question, runs it, and returns the answer, so no SQL knowledge is required.

You send a question in plain English. The AI reads the question, finds the relevant database tables and columns, generates a SQL query, runs it, and returns the resulting data. It keeps the conversation history so it can answer follow-up questions in context.

Who should use this workflow

Anyone who needs data from a PostgreSQL database but does not want to write SQL queries.

This helps data analysts, team members without technical skills, or business users who want quick database answers by chatting.

Tools and services used

- PostgreSQL database: Stores the data to be queried.

- OpenAI chat model (gpt-4o-mini by default): Processes natural language questions and generates SQL queries.

- n8n automation platform: Runs the chat workflow and manages integrations.

- LangChain AI agent node: Connects AI model with database tools and conversation memory.

- Postgres tool nodes: Get table schemas, table definitions, and run SQL queries.

Workflow input → process → output

Input

A user sends a chat message with a question about the data.

Processing steps

- The When chat message received node listens for the message.

- The AI Agent node reads the question and uses the database schema tools to build a SQL query with schema-qualified table names.

- The AI Agent calls the Get DB Schema and Tables List node to list the available schemas and tables.

- For detailed column information on a specific table, it calls the Get Table Definition node.

- Then the AI Agent sends the SQL query to the Execute SQL Query node to get the data.

- The Chat History node keeps the context of past interactions so the AI can answer follow-ups.

- The OpenAI Chat Model node runs the GPT model (by default, gpt-4o-mini) to generate human-like responses.
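The two schema-discovery steps above can be pictured as plain catalog queries. The sketch below is an assumption about what such queries might look like, not the SQL shipped in the workflow's nodes. It relies only on PostgreSQL's standard `information_schema` views; the function names and the `SYSTEM_SCHEMAS` constant are hypothetical.

```python
# Hypothetical stand-ins for the SQL behind the two schema tools.
# The workflow's actual node queries may differ.

SYSTEM_SCHEMAS = ("pg_catalog", "information_schema")


def list_tables_sql() -> str:
    """Return SQL that lists every user table together with its schema."""
    excluded = ", ".join(f"'{name}'" for name in SYSTEM_SCHEMAS)
    return (
        "SELECT table_schema, table_name\n"
        "FROM information_schema.tables\n"
        "WHERE table_type = 'BASE TABLE'\n"
        f"  AND table_schema NOT IN ({excluded})\n"
        "ORDER BY table_schema, table_name;"
    )


def table_definition_sql() -> str:
    """Return SQL that describes the columns of one table.

    $1 is the schema name and $2 the table name, supplied as query
    parameters rather than pasted into the SQL text.
    """
    return (
        "SELECT column_name, data_type, is_nullable, column_default\n"
        "FROM information_schema.columns\n"
        "WHERE table_schema = $1 AND table_name = $2\n"
        "ORDER BY ordinal_position;"
    )


if __name__ == "__main__":
    print(list_tables_sql())
    print(table_definition_sql())
```

Returning the schema name alongside each table name is what lets the agent write schema-prefixed queries in the next step.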

Output

Users get an immediate answer based on data extracted from the database without writing SQL.

Beginner step-by-step: How to use this workflow in n8n

Step 1: Import the workflow

- Download the workflow file using the Download button on this page.

- Open the n8n editor.

- Click “Import from File” and select the downloaded workflow file.

Step 2: Add credentials

- Go to Credentials in n8n settings.

- Enter your PostgreSQL connection details (host, port, user, password, database).

- Enter your OpenAI API key under OpenAI API credentials.

Step 3: Check node settings

- Verify database details in Postgres Tool nodes.

- Check that the AI Agent node's system message instructs the model to use schema prefixes in SQL.

- Confirm the OpenAI Chat Model node uses the desired GPT model, by default gpt-4o-mini.

Step 4: Test the workflow

- Use the webhook URL from the When chat message received node to send a sample chat message, for example: “Show total customers by country.”

- Check if you get an immediate, correct answer.
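For testing outside the n8n chat window, a small script can post the sample question to the webhook. This is a minimal sketch under stated assumptions: the URL is a placeholder to be replaced with the one shown on the When chat message received node, and the JSON field names (`action`, `sessionId`, `chatInput`) are assumed from the payload commonly sent by n8n's chat client, so verify them against your n8n version. The function names are hypothetical.

```python
import json
import urllib.request
import uuid

# Placeholder: copy the real URL from the "When chat message received" node.
WEBHOOK_URL = "https://n8n.example.com/webhook/REPLACE_ME/chat"


def build_chat_request(url: str, question: str, session_id: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST carrying one chat message."""
    payload = {
        "action": "sendMessage",   # assumed field name; check your n8n version
        "sessionId": session_id,   # assumed field name
        "chatInput": question,     # assumed field name
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(url: str, question: str, session_id: str = "", timeout: float = 60.0) -> str:
    """Send one question and return the raw response body as text."""
    request = build_chat_request(url, question, session_id or uuid.uuid4().hex)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

Calling `ask(WEBHOOK_URL, "Show total customers by country.")` sends the sample message. Reusing one `session_id` across calls is how this sketch assumes follow-up questions reach the same conversation history.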

Step 5: Activate the workflow

- Turn on the workflow in n8n by toggling its active status.

- Now the chat interface is ready for use in production.

If security or compliance matters for your data, consider self-hosting n8n for this setup.

Customizing the workflow

- Change the OpenAI model in the OpenAI Chat Model node to gpt-4 or gpt-3.5-turbo to balance cost and accuracy.

- Adjust the conversation history length in the Chat History node to keep more or less past messages.

- Edit SQL in the Get DB Schema and Tables List or Get Table Definition nodes to limit schemas or add extra fields.

- Add authentication to the webhook if sharing the chat publicly to secure access.

- Modify the AI Agent’s system message to change answer style, for example to tables or JSON format.
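To make the effect of the history length concrete, the sketch below models a fixed-size conversation window. It illustrates the general windowing idea only and is not the Chat History node's implementation; the `WindowedHistory` class and its `window` parameter are hypothetical.

```python
from collections import deque


class WindowedHistory:
    """Keep only the most recent `window` question/answer exchanges."""

    def __init__(self, window: int = 5) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        # A deque with maxlen silently drops the oldest exchange when full.
        self._turns = deque(maxlen=window)

    def add(self, question: str, answer: str) -> None:
        """Record one completed exchange."""
        self._turns.append((question, answer))

    def as_messages(self) -> list[dict[str, str]]:
        """Flatten the retained exchanges into chat-style messages."""
        messages = []
        for question, answer in self._turns:
            messages.append({"role": "user", "content": question})
            messages.append({"role": "assistant", "content": answer})
        return messages


if __name__ == "__main__":
    history = WindowedHistory(window=2)
    history.add("How many customers do we have?", "1,204 customers.")
    history.add("And in Germany?", "87 customers.")
    history.add("What about France?", "65 customers.")
    for message in history.as_messages():
        print(message["role"], ":", message["content"])
```

A larger window gives the model more context for follow-ups but sends more tokens with every request; a smaller one is cheaper but forgets earlier questions sooner.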

Common errors and fixes

- Error: SQL errors caused by missing schema prefixes

Fix: Edit the AI Agent node prompt to always add the schema before table names. Test with simple queries first.

- Error: Webhook triggers but no reply

Fix: Check workflow node connections and logs. Verify credentials for OpenAI and Postgres.

- Error: OpenAI API rate limit exceeded

Fix: Limit chat usage or switch to less costly models like gpt-3.5-turbo.
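When debugging the missing-schema-prefix error, it can help to scan a generated query for table names without a prefix. The helper below is a rough heuristic offered as a sketch, not part of the workflow: it only inspects the identifier after `FROM` or `JOIN`, ignores quoted identifiers and subqueries, and will wrongly flag CTE names or expressions such as `EXTRACT(YEAR FROM order_date)`. The function name is hypothetical.

```python
import re

# Captures the identifier that follows FROM or JOIN, e.g. "public.customers".
_TABLE_REF = re.compile(
    r"\b(?:FROM|JOIN)\s+([A-Za-z_][\w$]*(?:\.[A-Za-z_][\w$]*)?)",
    re.IGNORECASE,
)


def unqualified_tables(sql: str) -> list[str]:
    """Return table references that lack a schema prefix (heuristic)."""
    return [name for name in _TABLE_REF.findall(sql) if "." not in name]


if __name__ == "__main__":
    query = (
        "SELECT c.country, COUNT(*) "
        "FROM public.customers c JOIN orders o ON o.customer_id = c.id "
        "GROUP BY c.country"
    )
    print(unqualified_tables(query))
```

An empty result suggests every table reference the heuristic found carries a schema; any returned name is a candidate for the prefix the prompt should have added.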

Summary of benefits

✓ Easily ask PostgreSQL data questions in plain English and get SQL-based answers.

✓ Save time by skipping manual SQL query writing and debugging.

✓ Help non-technical users get data insights without learning SQL.

✓ Maintain conversation context for follow-up questions.

✓ Speed up team decisions with instant database access.