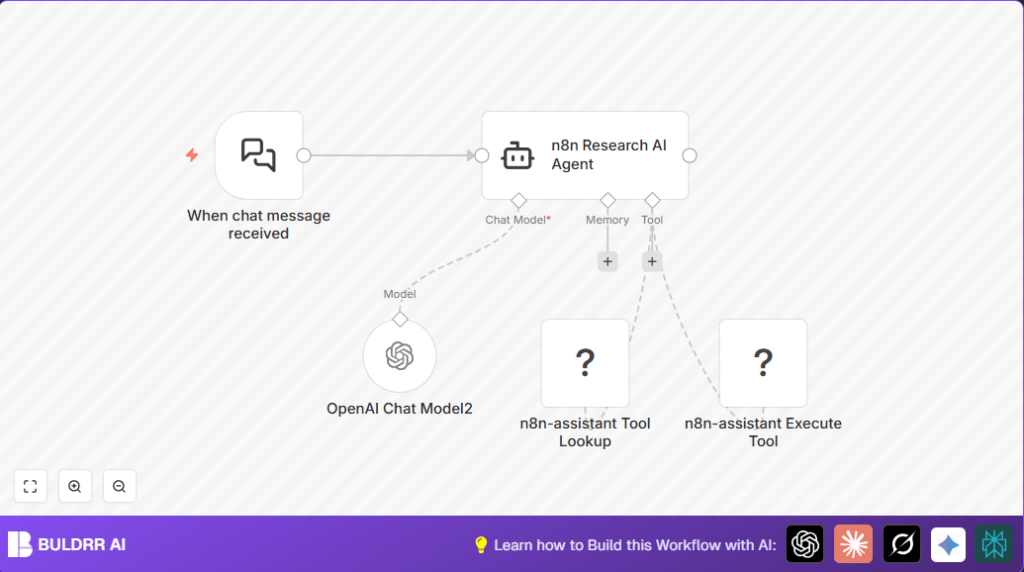

What this workflow does

This workflow answers questions about n8n quickly. It uses AI to find relevant information and reply with precise answers, so the same questions no longer have to be answered by hand each time.

When a chat message asks about an n8n feature, the workflow looks up the relevant tools and documentation, then replies with a clear, useful answer based on what it finds.

Tools and services used

- n8n LangChain nodes: For chat triggers and AI agent functions.

- OpenAI GPT-4o-mini model: To understand and generate natural language replies.

- Model Context Protocol (MCP) API: To look up n8n tools and docs and to run tool actions.

- Chat interface: Sends the user messages that trigger the workflow.

Inputs → Process → Output

Inputs

- A user sends a chat message asking about n8n.

- Credentials for the OpenAI API and the MCP API.

Process

- The Webhook node listens for new chat messages.

- The LangChain Agent reads the message and decides which steps to take.

- The MCP Client Tool Lookup node fetches relevant n8n tools and docs.

- OpenAI GPT-4o-mini analyzes the retrieved data together with the user's question.

- The MCP Client Tool Execute node runs the chosen tools if needed.

- The agent composes a tailored, easy-to-understand reply.
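MCP (Model Context Protocol) clients talk to a server using JSON-RPC 2.0 messages, and the lookup and execute steps above roughly correspond to the spec's `tools/list` and `tools/call` methods. The sketch below only builds those two request payloads. It assumes the n8n MCP Client Tool nodes send equivalent messages, it omits the `initialize` handshake and the transport (SSE or HTTP) that a real client needs, and `search_docs` is a hypothetical tool name.

```python
import json
from itertools import count

# JSON-RPC pairs each response with its request through the "id" field,
# so every request gets a fresh, increasing id.
_request_ids = count(1)


def tools_list_request(cursor=None):
    """Build a 'tools/list' request: ask the server which tools it offers."""
    params = {"cursor": cursor} if cursor else {}  # cursor pages through long lists
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list", "params": params}


def tools_call_request(name, arguments):
    """Build a 'tools/call' request: run one tool with the agent's chosen arguments."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


lookup = tools_list_request()
# "search_docs" is a hypothetical tool name; use one returned by tools/list.
execute = tools_call_request("search_docs", {"query": "HTTP Request pagination"})
print(json.dumps(lookup))
print(json.dumps(execute))
```

In practice the agent would send the lookup first, read the tool names and input schemas from the response, and only then send a `tools/call` for the tool it picked.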

Output

- The user gets a clear, quick answer about n8n.

- Manual searching and response delays are reduced.

Who should use this workflow

This is for people who manage n8n community chats or support. It suits anyone who answers the same questions about n8n features again and again.

Users get fast, relevant answers, and support teams spend less time on repetitive questions.

Beginner step-by-step: How to use this workflow in n8n

Step 1: Import the workflow

- Click the Download button on this page to get the workflow file.

- Open the n8n editor.

- Choose Import from File and select the downloaded file.

- This loads the entire workflow into your n8n instance.

Step 2: Add required credentials

- Open the n8n-assistant Tool Lookup and Execute Tool nodes.

- Enter your MCP API key under Credentials.

- Open the OpenAI Chat Model2 node.

- Paste your OpenAI API key to connect the GPT-4o-mini model.

Step 3: Update any settings needed

- If the workflow contains IDs, channels, emails, or folders that must match your account, update them.

- If the AI agent has a system message or prompts, check that they fit your use case.

- You can easily copy and paste prompt text from the workflow's input fields.

Step 4: Test the workflow

- Send a chat message to the webhook URL shown in the Webhook node.

- Check the response to see whether the answer uses data from the MCP server.

- Check the execution logs to diagnose any errors.
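To send the test message from a script, you can POST JSON to the webhook. This is a minimal sketch using only Python's standard library. The URL is a placeholder, and the body fields (`action`, `sessionId`, `chatInput`) are an assumption based on what n8n's chat trigger commonly expects; if your workflow starts with a plain Webhook node, send whatever fields it actually reads.

```python
import json
import urllib.request
import uuid

# Placeholder: copy the real URL from the trigger node in your workflow.
WEBHOOK_URL = "https://your-n8n-host/webhook/your-webhook-id"


def build_chat_request(url, message, session_id=None):
    """Build a POST request carrying one chat message as JSON."""
    body = {
        "action": "sendMessage",                        # assumed field names
        "sessionId": session_id or str(uuid.uuid4()),  # new session if none given
        "chatInput": message,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request(WEBHOOK_URL, "How do I paginate with the HTTP Request node?")

# Uncomment to actually send the message and print the workflow's reply:
# with urllib.request.urlopen(req, timeout=60) as resp:
#     print(resp.status, resp.read().decode("utf-8"))
```

Building the request separately from sending it lets you inspect the payload before any network call is made.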

Step 5: Activate for production

- Turn on the workflow in the n8n editor using the toggle switch.

- Monitor live chat traffic and AI replies in real time.

- Update credentials and system messages as needed.

Customization ideas

- Change the AI agent's system message to focus on specific docs or workflows.

- Switch to a different OpenAI GPT model if you want faster or more in-depth answers.

- Limit which MCP tools are fetched (for example, by tag or category, if your MCP server supports it) to narrow the results.

- Add a Code node after the AI agent to format replies as Markdown or HTML.
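The formatting logic for that last idea can stay small. The sketch below is plain Python that escapes the reply and turns blank-line-separated blocks into HTML paragraphs. It assumes the AI Agent's reply arrives as a single text field (often `output`, but check your node's execution data); how you wire it into a Code node depends on your n8n setup.

```python
import html


def reply_to_html(reply: str) -> str:
    """Convert a plain-text agent reply into simple, safe HTML.

    Blank lines separate paragraphs; single newlines become <br>.
    Escaping first prevents any markup in the reply from being rendered.
    """
    blocks = [block.strip() for block in reply.split("\n\n") if block.strip()]
    return "\n".join(
        "<p>" + html.escape(block).replace("\n", "<br>") + "</p>" for block in blocks
    )


print(reply_to_html("Use the <HTTP Request> node.\nEnable pagination.\n\nThen test it."))
```

Escaping before adding tags means anything the model writes that looks like HTML is shown as text rather than executed in the chat window.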

Troubleshooting common problems

Problem: MCP API authorization fails

Check that your MCP API key is correct and has not expired.

Re-enter the credentials in n8n and test them by running the n8n-assistant Tool Lookup node manually.
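If the node test is inconclusive, a quick probe from outside n8n can separate a bad key from a network problem. This sketch assumes your MCP server accepts the key as a Bearer token in the `Authorization` header (some servers use a custom header instead) and that you pass your server's endpoint URL; it maps standard HTTP status codes to likely causes.

```python
import urllib.error
import urllib.request


def explain_status(status: int) -> str:
    """Translate an HTTP status code into a likely credential problem."""
    if 200 <= status < 300:
        return "OK: the server accepted the credentials."
    if status == 401:
        return "Unauthorized: the key is missing, wrong, or expired."
    if status == 403:
        return "Forbidden: the key is valid but not allowed to use this server."
    return f"Unexpected status {status}: check the server URL and its logs."


def probe_mcp_auth(url: str, api_key: str) -> str:
    """Send one authenticated GET and report what the status code suggests."""
    # Assumption: Bearer-token auth; adjust the header if your server differs.
    req = urllib.request.Request(url, headers={"Authorization": f"Bearer {api_key}"})
    try:
        with urllib.request.urlopen(req, timeout=10) as resp:
            return explain_status(resp.status)
    except urllib.error.HTTPError as err:  # 4xx/5xx responses
        return explain_status(err.code)
    except urllib.error.URLError as err:   # DNS, TLS, or connection failures
        return f"Network error: {err.reason}"
```

Call it as `probe_mcp_auth("https://your-mcp-server/endpoint", "your-key")`, replacing both values with your own. A 401 points at the key itself, while a network error points at the URL or connectivity.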

Problem: AI agent gives no answer or a wrong answer

Make sure the system message is clear and focused on n8n questions.

Improve the prompt so it mentions the MCP tools and docs, then retest.

Problem: Webhook trigger does not start

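Verify that the external chat sends messages to exactly this webhook URL. n8n shows separate test and production webhook URLs, and the production URL only responds while the workflow is active.

Test the webhook with a tool such as Postman to confirm connectivity.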

Pre-production checklist

- Check that the webhook URL is publicly reachable and receiving messages.

- Confirm that the OpenAI API key works in the GPT-4o-mini node.

- Verify that the MCP API credentials can fetch tools.

- Test running a tool dynamically with sample parameters.

- Run a full end-to-end test to make sure the AI gives clear replies.
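If you repeat these checks before every release, a tiny runner keeps the results consistent. This is a hypothetical helper, not part of the workflow: each check is a function you write that returns True on success, and the lambdas below are stand-ins for real calls to the webhook, OpenAI, and the MCP server.

```python
from typing import Callable, Dict


def run_checklist(checks: Dict[str, Callable[[], bool]]) -> bool:
    """Run each named check, print PASS/FAIL, and return True only if all pass."""
    all_passed = True
    for name, check in checks.items():
        try:
            passed = bool(check())
        except Exception as err:  # a crashing check counts as a failure
            print(f"[ERROR] {name}: {err}")
            passed = False
        print(f"[{'PASS' if passed else 'FAIL'}] {name}")
        all_passed = all_passed and passed
    return all_passed


# Stand-in checks: replace each lambda with a real test before relying on it.
ready = run_checklist({
    "Webhook URL is public and receiving messages": lambda: True,
    "OpenAI API key works": lambda: True,
    "MCP credentials can fetch tools": lambda: True,
    "Tool runs with sample parameters": lambda: True,
    "Full end-to-end reply is clear": lambda: True,
})
```

Running every check even after one fails gives a complete picture in a single pass instead of stopping at the first problem.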

Deployment guide

After testing, enable the workflow to run live by toggling it on.

Keep monitoring chat replies to confirm that answers are correct and fast.

Turn on logging for AI and API usage to catch errors early.

Consider self-hosting n8n for more control over your data and, depending on your infrastructure, faster workflow runs.
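If you also call the OpenAI or MCP APIs from your own scripts (for example, smoke tests), a small wrapper can log how long each call takes and any error it raises. This is a generic sketch using Python's standard `logging` module; the logger name and `fetch_tools` are hypothetical stand-ins, not part of the workflow.

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("n8n-assistant-checks")  # hypothetical logger name


def logged(label):
    """Decorator that logs the duration of a call and any exception it raises."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
            except Exception:
                # log.exception records the traceback, then the error propagates.
                log.exception("%s failed after %.2fs", label, time.perf_counter() - start)
                raise
            log.info("%s succeeded in %.2fs", label, time.perf_counter() - start)
            return result
        return wrapper
    return decorator


@logged("mcp tools/list")
def fetch_tools():
    # Hypothetical stand-in: replace with a real request to your MCP server.
    return ["tool_a", "tool_b"]


tools = fetch_tools()
```

Re-raising after logging keeps the wrapper transparent: callers still see the original exception, but the log shows when it happened and how long the call ran.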

Summary / Results

✓ Saves time otherwise spent answering repeated n8n questions.

✓ Gives fast answers grounded in MCP content.

✓ Makes n8n support easier for teams or community managers.

✓ Integrates chat, AI, and tools inside n8n.

→ Users get answers without waiting for a human to respond.

→ Support teams focus more on new problems, less on old questions.