What This Automation Does

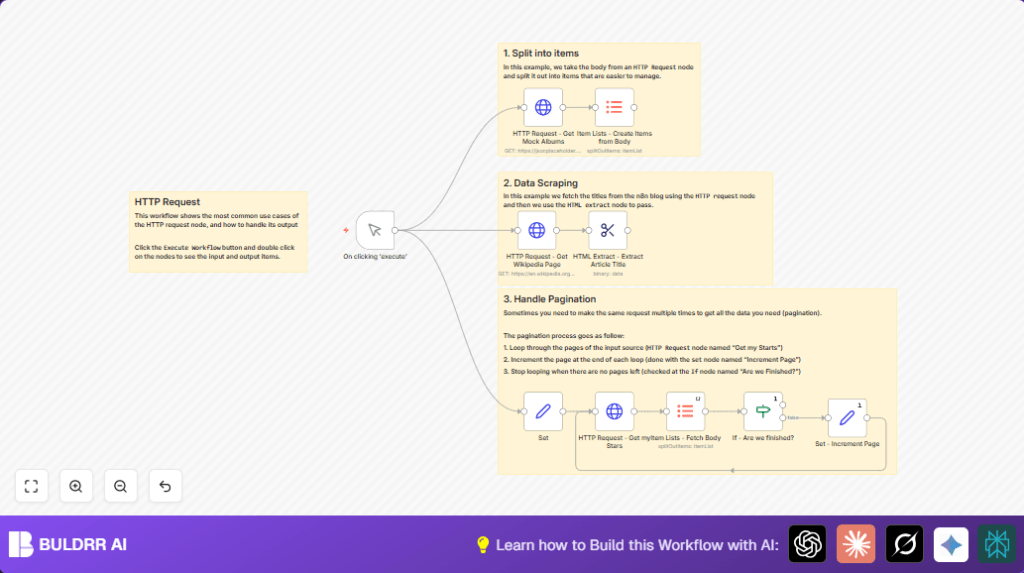

This workflow fetches data from several web services and processes it automatically.

It removes the need to gather data from APIs and web pages by hand. The result is clean data, split into individual items and collected across all pages, ready to use.

Specifically, the workflow:

- Gets mock album data from a test API and breaks it into items for easy use.

- Downloads a random Wikipedia page and extracts the article title from the HTML using a CSS selector.

- Calls the GitHub API to collect a user’s starred repositories, handling multiple pages automatically.

- Splits large response bodies into single items to simplify later processing.

- Checks whether more pages of data remain, so requests repeat only when needed.

- Runs on manual start, letting you control execution and see results step-by-step.
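The splitting step in the list above can be sketched in a few lines of Python. This is only an illustration of the idea, not the workflow's own node configuration: the function name `split_into_items` is hypothetical, and the sample values are made up to resemble the mock album data.

```python
from typing import Any, Dict, List


def split_into_items(body: Any) -> List[Dict[str, Any]]:
    """Turn one response body into a list of n8n-style items.

    A JSON array becomes one item per element; any other value
    becomes a single item. Each item wraps its data under "json".
    """
    elements = body if isinstance(body, list) else [body]
    return [{"json": element} for element in elements]


# Hypothetical sample shaped like the mock album data (values are made up).
albums = [
    {"userId": 1, "id": 1, "title": "first album"},
    {"userId": 1, "id": 2, "title": "second album"},
]

items = split_into_items(albums)
print(len(items))                 # 2
print(items[1]["json"]["title"])  # second album
```

Working with one item per record means later steps can filter, map, or forward each album on its own instead of handling one large array.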

Who Should Use This Workflow

This workflow suits anyone who needs to gather and organize data from APIs and HTML sources, including developers and beginners who want to avoid repetitive copying and parsing.

You do not need deep coding skills, but some familiarity with HTTP requests helps.

The workflow works best for retrieving lists, handling pagination, and extracting HTML content cleanly.

Tools and Services Used

- jsonplaceholder.typicode.com: Supplies sample JSON album data.

- Wikipedia Random Page URL: Source for fetching article HTML pages.

- GitHub API: Provides user star data with pagination parameters.
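As a rough sketch, the three request targets might look like the following. The exact URLs are assumptions based on the public services named above, not values read from the workflow file, and the helper `starred_url` is a hypothetical name used only for illustration.

```python
from urllib.parse import quote, urlencode

# Assumed endpoints for the three services (not taken from the workflow file).
ALBUMS_URL = "https://jsonplaceholder.typicode.com/albums"
RANDOM_WIKIPEDIA_URL = "https://en.wikipedia.org/wiki/Special:Random"


def starred_url(username: str, page: int = 1, per_page: int = 30) -> str:
    """Build the GitHub URL for one page of a user's starred repositories."""
    query = urlencode({"per_page": per_page, "page": page})
    return f"https://api.github.com/users/{quote(username)}/starred?{query}"


print(starred_url("octocat", page=2, per_page=15))
# https://api.github.com/users/octocat/starred?per_page=15&page=2
```

Keeping the page number and page size as query parameters is what lets the workflow request one page at a time.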

Beginner Step-by-Step: How to Use This Workflow in n8n Production

Step 1: Download and Import Workflow

- Click the Download button on this page to get the workflow file.

- Open the n8n editor where you want to use the flow.

- Click “Import from File” to add the downloaded workflow.

Step 2: Configure Basic Settings

- Add necessary API credentials or keys, like a GitHub personal access token if private data is needed.

- Update any IDs, GitHub usernames, emails, or folders in the Set node as required for your data.

- If specific URLs or prompts are part of the workflow inputs, paste them in as instructed.

Step 3: Test the Workflow

- Manually trigger the workflow by clicking “Execute Workflow”.

- Watch each node output to ensure data flows correctly and results look right.

Step 4: Activate for Production

- Once tests pass, keep running the workflow manually, or add a scheduling node such as Cron and activate the workflow so it runs at intervals.

- Monitor logs in n8n for errors or abnormal stops.

This simple process gets the workflow running for real data collection fast.

For better control and privacy, consider self-hosting n8n.

Inputs, Processing, and Outputs Explained

Inputs

- Manual trigger to start the workflow.

- API URLs and parameters set in the Set node.

- GitHub username, page number, and items per page.

Processing Steps

- Request mock album JSON and split into items.

- Request random Wikipedia page HTML and extract the title using CSS selectors.

- Use GitHub API to get stars list with pagination parameters.

- Split GitHub star list JSON into individual items.

- Check whether another page exists: an empty response means the last page has been reached.

- Increment page count to continue pagination if more data is available.
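The pagination steps above follow a simple loop: request a page, stop if it is empty, otherwise keep the results and increment the page number. A minimal Python sketch of that logic is shown here. The names `collect_all_pages` and `fake_fetch` are hypothetical, and the fetch function is a stand-in for the real HTTP request so the example runs without network access.

```python
from typing import Any, Callable, Dict, List

Page = List[Dict[str, Any]]


def collect_all_pages(fetch_page: Callable[[int], Page], first_page: int = 1) -> Page:
    """Request pages one by one until an empty page comes back."""
    collected: Page = []
    page = first_page
    while True:
        batch = fetch_page(page)
        if not batch:      # empty page: nothing left to fetch
            break
        collected.extend(batch)
        page += 1          # increment the page counter and request again
    return collected


# Stand-in for the HTTP request, with made-up repository names.
FAKE_PAGES = {
    1: [{"name": "repo-a"}, {"name": "repo-b"}],
    2: [{"name": "repo-c"}],
}


def fake_fetch(page: int) -> Page:
    return FAKE_PAGES.get(page, [])


repos = collect_all_pages(fake_fetch)
print([repo["name"] for repo in repos])  # ['repo-a', 'repo-b', 'repo-c']
```

Passing the fetch function in as an argument keeps the loop logic separate from the request itself, which mirrors how the workflow separates the request node from the check node.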

Outputs

- Lists of album items for easy use.

- Plain text article title from Wikipedia page.

- Complete paginated list of GitHub starred repositories.

- All data separated into individual items ready for other workflows or analysis.

Edge Cases and Failures

- Empty or error HTTP responses: Can happen with bad URLs, missing query parameters, or no internet. Verify correct settings and connectivity.

- No data from HTML extraction: Usually due to wrong CSS selectors or HTML structure changes. Update the selector in the extraction node.

- Pagination loops forever: Happens if the empty check is incorrect. Fix the If – Are we finished? node condition so it stops at the right time.
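One way to guard against the endless pagination case is to cap the number of requests, so a wrong stop condition fails loudly instead of looping. The sketch below shows the idea in Python under that assumption. The function name `collect_with_limit` and the `max_pages` parameter are hypothetical and are not settings of the workflow.

```python
from typing import Any, Callable, Dict, List

Page = List[Dict[str, Any]]


def collect_with_limit(fetch_page: Callable[[int], Page], max_pages: int = 50) -> Page:
    """Collect pages until one is empty, but never request more than max_pages."""
    collected: Page = []
    for page in range(1, max_pages + 1):
        batch = fetch_page(page)
        if not batch:      # normal exit: the last page has been passed
            return collected
        collected.extend(batch)
    # Reaching this point means no empty page was seen within the cap.
    raise RuntimeError(
        f"No empty page after {max_pages} requests; check the stop condition."
    )


def never_empty(page: int) -> Page:
    """Simulates a source that always returns data, as a broken check would see it."""
    return [{"page": page}]


try:
    collect_with_limit(never_empty, max_pages=3)
except RuntimeError as error:
    print(error)
```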

Customization Ideas

- Change GitHub usernames in the Set node to get stars for different users.

- Adjust the perpage number to increase or decrease how much data each request retrieves.

- Pick other Wikipedia elements by changing the CSS selector, like paragraphs or infoboxes.

- Add new HTTP request nodes for other APIs and process their results in the same workflow.
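To illustrate the selector customization mentioned above, here is a small Python sketch that pulls the text of one element out of an HTML page. It is a simplified stand-in for a CSS id selector such as `#firstHeading`, built on the standard library's `html.parser` rather than a full CSS engine. The class and function names are hypothetical, the sample HTML is made up, and the assumption that the article title lives in an element with id `firstHeading` reflects common Wikipedia markup rather than anything stated in the workflow.

```python
from html.parser import HTMLParser
from typing import List, Optional

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta", "wbr"}


class ElementTextById(HTMLParser):
    """Collect the text inside the first element with a given id."""

    def __init__(self, element_id: str) -> None:
        super().__init__()
        self.element_id = element_id
        self.depth = 0            # greater than 0 while inside the target
        self.done = False         # True once the target element has closed
        self.parts: List[str] = []

    def handle_starttag(self, tag, attrs):
        if self.done or tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif dict(attrs).get("id") == self.element_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self.depth:
            return
        self.depth -= 1
        if self.depth == 0:
            self.done = True

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def extract_text_by_id(html: str, element_id: str) -> Optional[str]:
    """Return the stripped text of the element, or None if nothing matched."""
    parser = ElementTextById(element_id)
    parser.feed(html)
    parser.close()
    text = "".join(parser.parts).strip()
    return text or None


# Made-up page fragment for demonstration.
SAMPLE_HTML = """
<html><body>
  <h1 id="firstHeading"><span>Example article</span></h1>
  <div id="siteSub">From a sample page</div>
</body></html>
"""

print(extract_text_by_id(SAMPLE_HTML, "firstHeading"))  # Example article
print(extract_text_by_id(SAMPLE_HTML, "siteSub"))       # From a sample page
print(extract_text_by_id(SAMPLE_HTML, "missing"))       # None
```

Returning `None` when nothing matches makes a wrong or outdated selector easy to detect in a later step.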

Summary and Results

✓ Manual HTTP data fetching is replaced by automated workflow steps.

✓ JSON and HTML data are split into smaller, usable items.

✓ Pagination is handled without manual reruns.

✓ Data from multiple sources is cleaned and quickly ready to use.

→ Results can feed reports, dashboards, or further workflows.

→ Ease of use helps both beginners and pros save time.