What This Automation Does

This workflow combines real-time search results with AI so that chat answers reflect current information. It helps support teams avoid outdated or incorrect chatbot replies. The result is fast, current answers that save time and improve the user experience.

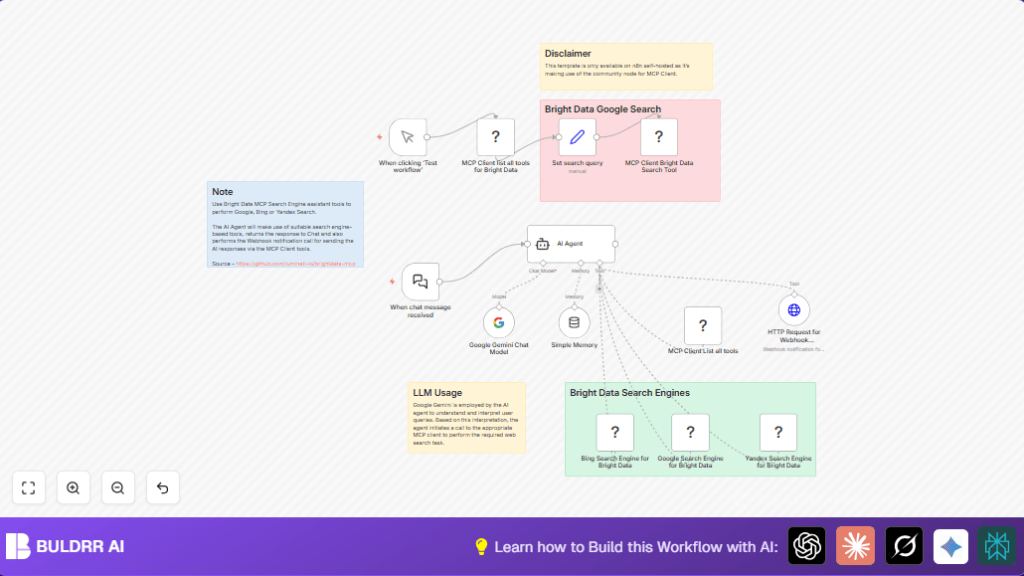

The workflow listens for chat messages, interprets them with Google Gemini, and retrieves fresh results from Google, Bing, and Yandex. It then composes an answer from both the model's reasoning and the live web data. It also forwards chat replies to other systems for tracking.

The workflow keeps chat history so that answers fit the conversation. Users do not have to wait or check back later for an answer. Replies are quick, up to date, and clear.

Tools and Services Used

- LangChain Chat Trigger node: Starts the workflow when a new chat message arrives.

- Google Gemini chat model: Understands messages and writes chat answers. (n8n labels the credential for it "Google Gemini(PaLM) Api".)

- Bright Data MCP Client API: Fetches live search results from Google, Bing, and Yandex.

- LangChain Simple Memory Buffer node: Saves chat history for context.

- HTTP Request node: Sends AI replies to external webhooks.

How This Workflow Works

Inputs

- Incoming chat messages via the LangChain Chat Trigger node.

- API keys for Google Gemini and Bright Data MCP Client.

Processing Steps

- The AI Agent node reads the chat input and decides whether a search is needed.

- If so, the MCP Client node fetches the latest search results from Google, Bing, and Yandex.

- Google Gemini uses the search data and the chat context from Simple Memory to generate an answer.

- The answer is sent to a webhook through the HTTP Request node for logging or alerting.
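
As a rough mental model, the processing steps can be sketched in plain Python. This is not how n8n implements the agent. The callables `needs_search`, `search`, `generate`, and `notify` are hypothetical stand-ins for the AI Agent's decision, the MCP Client call, the Gemini call, and the HTTP Request node.

```python
from typing import Callable, List


def handle_message(
    message: str,
    history: List[str],
    needs_search: Callable[[str], bool],
    search: Callable[[str], List[str]],
    generate: Callable[[str, List[str], List[str]], str],
    notify: Callable[[str], None],
) -> str:
    """Process one chat message following the steps listed above."""
    # The agent decides whether live search results are needed.
    results = search(message) if needs_search(message) else []
    # The model answers from the message, the search data, and prior turns.
    answer = generate(message, results, list(history))
    # The answer goes to an external webhook for logging or alerting.
    notify(answer)
    # Both turns are stored so the next message has context.
    history.extend([message, answer])
    return answer
```

Passing the four steps in as functions keeps the sketch independent of any real service, so each one can be swapped for a stub when reasoning about the flow.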

Outputs

- Fresh, relevant chat replies with live search info included.

- Webhook notification containing AI chat answer.

- Maintained chat memory for continued conversation.

Who Should Use This Workflow

Support teams needing quick, accurate chat answers with fresh info.

Anyone wanting better chatbot responses without manual data searching.

Users wanting to combine AI understanding with live web searches.

Beginner Step-by-Step: How to Use This Workflow in n8n

Step 1: Import the Workflow

- Download the workflow file using the Download button on this page.

- Open your n8n editor (a self-hosted n8n instance gives the best community node support).

- Click on “Import from File” and choose the downloaded workflow file.

Step 2: Configure Credentials and IDs

- Add API Credentials for Google Gemini and Bright Data MCP Client under n8n Credentials.

- Update webhook IDs or URLs in the LangChain Chat Trigger node and HTTP Request node if needed.

- Change any email, folder, or table IDs used for logging if your setup requires it.

Step 3: Test and Activate

- Use the Manual Trigger node to run the workflow and check outputs.

- Ensure chat input triggers the workflow and real-time search results appear.

- Fix any errors by reviewing node logs and credentials.

- When ready, switch the workflow to active mode for live use.

- Connect your chat frontend to the LangChain Chat Trigger webhook URL.
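
For the last step, a chat frontend only needs to POST JSON to the Chat Trigger webhook URL. The sketch below shows one way to do that with the Python standard library. The field names `action`, `sessionId`, and `chatInput` are assumptions based on the defaults of n8n's chat widget, and the URL in the usage note is a placeholder, so check both against your own Chat Trigger node.

```python
import json
import urllib.request


def build_chat_request(webhook_url: str, session_id: str, text: str) -> urllib.request.Request:
    """Build the POST request for one chat message."""
    # Assumed field names; adjust them to what your Chat Trigger expects.
    body = {"action": "sendMessage", "sessionId": session_id, "chatInput": text}
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_message(webhook_url: str, session_id: str, text: str, timeout: float = 30.0) -> str:
    """Send one message and return the raw response body as text."""
    request = build_chat_request(webhook_url, session_id, text)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

A call would look like `send_chat_message("https://your-n8n-host/webhook/<id>/chat", "session-1", "What changed today?")`. Reusing the same session ID across calls is what lets the memory node tie messages into one conversation.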

Customization Ideas

- Add more search engines or data sources by adding new MCP Client nodes and updating AI Agent instructions.

- Make the search query input dynamic by connecting external inputs to the Set node.

- Change the webhook URL in the HTTP Request node to send notifications to Slack, email, or other systems.

- Adjust memory size in the Simple Memory Buffer node to keep more or fewer past messages.

- Switch Google Gemini models by selecting different model versions in the LM Chat Google Gemini node.
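
Adjusting the memory size amounts to changing the length of a sliding window over past messages. The sketch below illustrates the idea only and is not n8n's implementation; `WindowMemory` and `max_messages` are hypothetical names.

```python
from collections import deque
from typing import Deque, List, Tuple


class WindowMemory:
    """Keep only the most recent messages, dropping the oldest first."""

    def __init__(self, max_messages: int) -> None:
        if max_messages < 1:
            raise ValueError("max_messages must be at least 1")
        # A deque with maxlen discards the oldest entry automatically.
        self._messages: Deque[Tuple[str, str]] = deque(maxlen=max_messages)

    def add(self, role: str, text: str) -> None:
        self._messages.append((role, text))

    def context(self) -> List[Tuple[str, str]]:
        """Return the retained messages, oldest first."""
        return list(self._messages)
```

A larger window gives the model more context per reply at the cost of a longer prompt; a smaller one does the opposite.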

Common Problems and Fixes

Problem: The "MCP Client list all tools" node returns no tools

Cause: Incorrect or expired MCP Client API keys.

Fix: Re-enter valid API keys in Credentials and retest node connection.

Problem: AI Agent does not trigger search tools

Cause: The system prompt lacks tool usage instructions, or the Simple Memory node is not connected.

Fix: Confirm system message includes tool usage instructions and attach memory node properly.

Problem: Webhook notifications are empty or fail

Cause: The chat response data is not passed correctly to the HTTP Request node body.

Fix: Map the chat_response output from the AI Agent into the HTTP Request node's payload.
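
To make the empty-payload failure easier to spot, the mapping can be thought of as a small function that refuses to build a body without a reply. This is a sketch under assumptions: `build_webhook_body` is a hypothetical helper, and `output` as the name of the AI Agent's result field is an assumption to verify in your node's output panel. The `chat_response` key is the one named in the fix above.

```python
import json


def build_webhook_body(agent_item: dict, source_field: str = "output") -> str:
    """Return the JSON body for the webhook, or fail loudly if the reply is missing."""
    text = agent_item.get(source_field)
    # An absent, non-string, or blank reply is what produces empty notifications.
    if not isinstance(text, str) or not text.strip():
        raise ValueError(f"agent item has no non-empty '{source_field}' field")
    return json.dumps({"chat_response": text})
```

If the error fires, the field name in the mapping does not match what the agent actually emits, which is the usual cause of empty notifications.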

Pre-Production Checklist

- Verify MCP Client API credentials are active.

- Test each node separately with sample data.

- Check Google Gemini API works with a test call.

- Confirm chat messages trigger workflow execution.

- Make sure webhook endpoint correctly receives data.

Deployment Guide

Activate the workflow in n8n by toggling it from inactive to active.

Update chat frontends to send messages to the LangChain Chat Trigger webhook URL.

Monitor workflow runs for errors using n8n's execution list.

Summary of Benefits

✓ Saves time by automating real-time web searches inside chat replies.

✓ Improves chatbot answers with fresh, relevant info from Google, Bing, Yandex.

✓ Keeps conversation context using memory to give better responses.

✓ Sends chat replies live to external systems for logging or notifications.

→ Supports smarter, faster chat help without manual lookups.