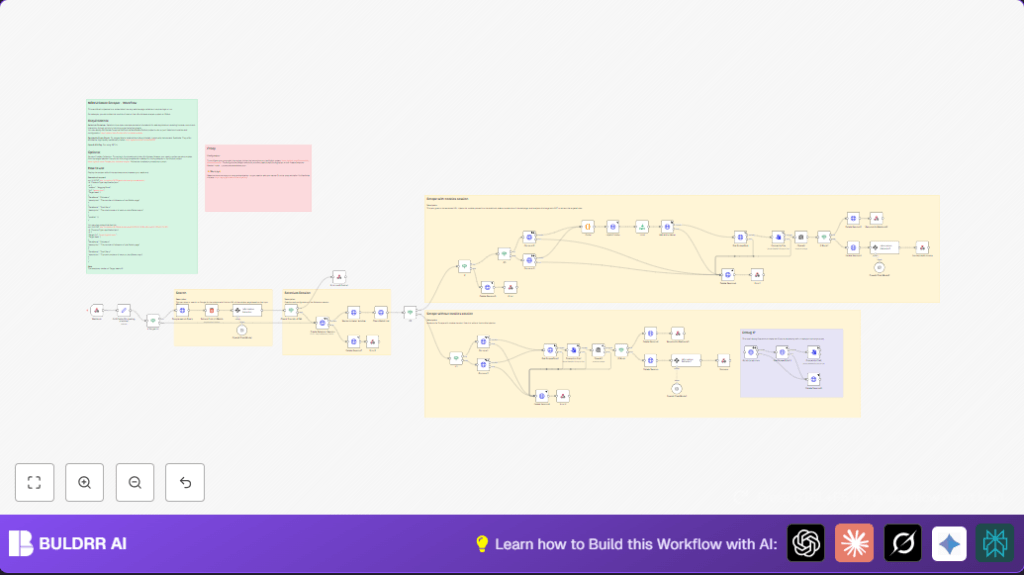

What This Workflow Does

This workflow automates web scraping of pages that require a login or are protected by anti-bot defenses.

It opens a Selenium-controlled browser with proxy support to reduce the risk of being blocked.

If no direct URL is given, it finds one through a Google search.

It loads authentication cookies when they are available.

It takes screenshots of the pages and uses GPT-4 to read the images and extract the requested data.

Finally, it cleans up the browser sessions and returns structured results.
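The URL fallback can be illustrated with a short Python sketch. It assumes the lookup is a plain Google search restricted with the `site:` operator; the helper names `looks_like_full_url`, `build_search_url`, and `resolve_target` are hypothetical and do not come from the workflow's nodes.

```python
from urllib.parse import urlencode, urlparse


def looks_like_full_url(value: str) -> bool:
    """Treat the input as a direct URL only if it has an http(s) scheme and a host."""
    parsed = urlparse(value)
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def build_search_url(subject: str, domain: str) -> str:
    """Build a Google search URL limited to one domain via the site: operator."""
    query = f"site:{domain} {subject}"
    return "https://www.google.com/search?" + urlencode({"q": query})


def resolve_target(subject: str, url_or_domain: str) -> tuple:
    """Return ("direct", url) when a full URL was given, else ("search", search_url)."""
    if looks_like_full_url(url_or_domain):
        return ("direct", url_or_domain)
    return ("search", build_search_url(subject, url_or_domain))
```

With the example payload's `"Url": "github.com"`, `resolve_target("Hugging Face", "github.com")` takes the search branch, because a bare domain has no scheme.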

Who Should Use This Workflow

Anyone who needs reliable data from websites that require a login or actively block scrapers.

Useful for users who monitor many target pages daily.

Ideal for researchers and marketers who want faster, repeatable scraping.

Tools and Services Used

- n8n: Automation platform running the workflow.

- Selenium Container: Runs Chrome WebDriver with anti-detection features.

- Residential Proxy Server: Helps avoid IP bans.

- OpenAI API (GPT-4): Analyzes screenshots to extract data.

- Browser Cookie Extension: Optional, to collect valid login cookies.
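For readers calling the OpenAI API directly, the Python sketch below builds a Chat Completions request body that sends one screenshot together with an extraction prompt. The prompt wording, the BLOCK reply convention, and the function name are assumptions, not the workflow's actual Information Extractor configuration; the model name is left as a parameter because the source only says GPT-4.

```python
import base64


def build_vision_request(png_bytes: bytes, target_data: list, model: str) -> dict:
    """Build a Chat Completions body pairing an extraction prompt with one screenshot."""
    # Describe each requested field using the DataName/description keys from the payload.
    fields = "\n".join(
        f"- {t['DataName']}: {t.get('description', '')}" for t in target_data
    )
    prompt = (
        "Extract the following values from the screenshot and reply with a JSON object "
        "keyed by field name:\n" + fields + "\n"
        "If the page shows a block, captcha, or access-denied screen, reply only with BLOCK."
    )
    # The screenshot is passed inline as a base64 data URL.
    image_url = "data:image/png;base64," + base64.b64encode(png_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
    }
```

The returned dict would be sent as the JSON body of a POST to the Chat Completions endpoint with your API key in the `Authorization` header; that network call is left out here.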

Inputs, Processing Steps, Output

Inputs

- A webhook receives a JSON payload with the subject, a URL or domain, the names of the target data points, and optional cookies.
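Because the example payload's keys (`Url`, `Target data`, `DataName`) mix case and contain spaces, a small validation step helps catch malformed requests early. This is an illustrative standalone Python sketch, not the workflow's own node logic; the class and function names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TargetField:
    name: str
    description: str


@dataclass
class ScrapeRequest:
    subject: str
    url_or_domain: str
    targets: List[TargetField]
    cookies: List[dict] = field(default_factory=list)


def parse_payload(payload: dict) -> ScrapeRequest:
    """Validate a webhook body that uses the key names from the example payload."""
    missing = [key for key in ("subject", "Url", "Target data") if key not in payload]
    if missing:
        raise ValueError("missing keys: " + ", ".join(missing))
    targets = []
    for item in payload["Target data"]:
        if "DataName" not in item:
            raise ValueError("every target data entry needs a DataName")
        targets.append(TargetField(item["DataName"], item.get("description", "")))
    if not targets:
        raise ValueError("at least one target data point is required")
    # Cookies are optional; treat null or absent as an empty list.
    cookies = payload.get("cookies") or []
    if not isinstance(cookies, list):
        raise ValueError("cookies must be a list")
    return ScrapeRequest(payload["subject"], payload["Url"], targets, cookies)
```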

Processing Steps

- Checks whether a URL is given; if not, runs a Google search scoped to the domain and subject.

- Extracts the top matching URL from the search results.

- Uses OpenAI GPT-4 to pick the best URL for the requested data.

- Creates a Selenium session with proxy and anti-bot settings.

- Resizes the browser window and removes WebDriver traces.

- Navigates to the target URL.

- Injects authentication cookies if any are provided.

- Takes screenshots and converts them to image files.

- Sends the screenshots to GPT-4 to extract the requested data and detect whether the page was blocked.

- Processes the AI response and prepares the output.

- Deletes the Selenium session.
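The Selenium steps map onto W3C WebDriver HTTP endpoints. The Python sketch below only builds the list of calls (it sends nothing), so it can be read or tested offline. It assumes the Create Selenium Session node talks to the container over the standard WebDriver protocol; the window size defaults, the placeholder session id, the re-navigation after cookie injection, and the `send` callable are illustrative choices, and the workflow's anti-detection script is omitted.

```python
from typing import Callable, List, Optional, Tuple

# One planned WebDriver call: (HTTP method, path, JSON body or None).
Call = Tuple[str, str, Optional[dict]]

# Placeholder; the real id comes from the response to POST /session.
SESSION = "/session/{sessionId}"


def plan_webdriver_calls(target_url: str, cookies: List[dict], proxy: Optional[str] = None,
                         width: int = 1366, height: int = 768) -> List[Call]:
    """List the W3C WebDriver calls for one scrape, ending with session cleanup."""
    caps: dict = {"browserName": "chrome"}
    if proxy:
        # W3C proxy capability; "host:port" is used for both HTTP and HTTPS traffic.
        caps["proxy"] = {"proxyType": "manual", "httpProxy": proxy, "sslProxy": proxy}
    calls: List[Call] = [
        ("POST", "/session", {"capabilities": {"alwaysMatch": caps}}),
        ("POST", f"{SESSION}/window/rect", {"width": width, "height": height}),
        ("POST", f"{SESSION}/url", {"url": target_url}),
    ]
    # WebDriver only accepts cookies for the currently loaded domain, hence after navigation.
    for cookie in cookies:
        calls.append(("POST", f"{SESSION}/cookie", {"cookie": cookie}))
    if cookies:
        # Reload so the page renders with the injected cookies (an assumed extra step).
        calls.append(("POST", f"{SESSION}/url", {"url": target_url}))
    calls.append(("GET", f"{SESSION}/screenshot", None))
    calls.append(("DELETE", SESSION, None))
    return calls


def execute(plan: List[Call], send: Callable[[str, str, Optional[dict]], object]) -> list:
    """Run the plan; the final DELETE is sent even if an earlier call raises."""
    *steps, cleanup = plan
    try:
        return [send(method, path, body) for method, path, body in steps]
    finally:
        send(*cleanup)
```

The `try`/`finally` in `execute` mirrors the advice to run the Delete Session node on failure: whatever happens to the screenshot call, the session is still deleted.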

Output

- Structured JSON containing the requested values, or an error message if the page was blocked or the data was missing.
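One way to turn the model's reply into the output described above is sketched below in Python. It assumes the prompt asks GPT-4 to answer either with the single word BLOCK or with a JSON object keyed by `DataName`; that response contract, the `status` values, and the function name are hypothetical.

```python
import json
from typing import List


def build_output(ai_text: str, requested: List[str]) -> dict:
    """Map a raw model reply to BLOCK, ERROR, or OK with per-field values."""
    text = ai_text.strip()
    if text.upper() == "BLOCK":
        return {"status": "BLOCK",
                "error": "The page appears to be blocked by a firewall or anti-bot check."}
    try:
        values = json.loads(text)
    except json.JSONDecodeError:
        return {"status": "ERROR", "error": "The AI response was not valid JSON."}
    if not isinstance(values, dict):
        return {"status": "ERROR", "error": "The AI response was not a JSON object."}
    # Keep only the requested fields; absent ones become None.
    data = {name: values.get(name) for name in requested}
    result = {"status": "OK", "data": data}
    missing = [name for name, value in data.items() if value in (None, "")]
    if missing:
        result["missing"] = missing
    return result
```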

Beginner Step-by-Step: How to Use This Workflow in n8n

Download and Import

- Click the Download button on this page to get the workflow file.

- Inside the n8n editor, use the menu to select ‘Import from File’ and upload the workflow.

Configure Credentials and Settings

- Add your OpenAI API key as a credential in n8n.

- Fill in the proxy settings and the Selenium container address in the Create Selenium Session node.

- If you connect this workflow to other services, update any IDs, emails, or channels they need.

Provide Input and Test

- Send a test POST request to the webhook with a JSON body that matches the format below.

- Check the execution for errors and confirm that it returns the structured data.

Activate Workflow

- Turn on the workflow switch to enable it in production.

- Monitor runs and logs for any issues.

- Optionally, consider self-hosting n8n for more control.

Example webhook payload:

```json
{
  "subject": "Hugging Face",
  "Url": "github.com",
  "Target data": [
    {"DataName": "Followers", "description": "Number of followers"},
    {"DataName": "Total Stars", "description": "Repo stars total"}
  ],
  "cookies": []
}
```
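To send the test request from a script, the Python sketch below builds the POST with the standard library. The webhook URL used with it is a placeholder: n8n serves test webhooks under `/webhook-test/` on port 5678 by default, but the `scrape` path is hypothetical and should be replaced with the path shown in your Webhook node.

```python
import json
import urllib.request


def build_test_request(webhook_url: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST to the workflow's webhook."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def send(request: urllib.request.Request, timeout: float = 300.0) -> str:
    """Send the request and return the response body; scraping runs can be slow."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


EXAMPLE_PAYLOAD = {
    "subject": "Hugging Face",
    "Url": "github.com",
    "Target data": [
        {"DataName": "Followers", "description": "Number of followers"},
        {"DataName": "Total Stars", "description": "Repo stars total"},
    ],
    "cookies": [],
}
```

Usage: `print(send(build_test_request("http://localhost:5678/webhook-test/scrape", EXAMPLE_PAYLOAD)))`. Building and sending are kept separate so the request can be inspected before anything hits the network.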

Customization Ideas

- Add proxy authentication details in the Create Selenium Session node.

- Add target data points, or change their names and descriptions, in the webhook input.

- Adjust the window size in the Resize browser window node to simulate mobile devices or other screen sizes.

- Modify the GPT prompts in the Information Extractor nodes to extract different or more detailed information.

- Use the browser cookie extension to collect cookies and inject them for authenticated scraping.
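Cookies exported by a browser extension usually need reshaping before they fit the WebDriver Add Cookie command. The Python sketch assumes a hypothetical export shaped like Chrome's `chrome.cookies` API objects (`expirationDate` in seconds, lowercase `sameSite` values such as `no_restriction`); check what your extension actually produces. The output keys follow the W3C WebDriver cookie object, and the function name is illustrative.

```python
from typing import Optional

# chrome.cookies sameSite values mapped to W3C WebDriver values.
SAME_SITE = {"lax": "Lax", "strict": "Strict", "no_restriction": "None"}


def to_webdriver_cookie(raw: dict) -> dict:
    """Convert one exported cookie into a WebDriver cookie object."""
    cookie = {
        "name": raw["name"],
        "value": raw["value"],
        "path": raw.get("path", "/"),
        "secure": bool(raw.get("secure", False)),
        "httpOnly": bool(raw.get("httpOnly", False)),
    }
    if raw.get("domain"):
        cookie["domain"] = raw["domain"]
    # Session cookies have no expiry; persistent ones carry fractional seconds.
    if not raw.get("session", False) and "expirationDate" in raw:
        cookie["expiry"] = int(raw["expirationDate"])
    # "unspecified" and unknown values are dropped so the browser default applies.
    same_site: Optional[str] = SAME_SITE.get(str(raw.get("sameSite", "")).lower())
    if same_site:
        cookie["sameSite"] = same_site
    return cookie
```

If the workflow expects WebDriver-style objects in the payload's `cookies` array, the converted list could be placed there directly.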

Handling Errors and Edge Cases

- If a cookie's domain does not match the target URL, injection fails with an error. Confirm that the domains match before injecting.

- If Selenium sessions are not deleted, stale sessions accumulate in the container. Make sure the Delete Session nodes also run when the workflow fails.

- A ‘BLOCK’ status means the site's firewall blocked the scraper. Try residential proxies and change the user-agent string.

- Confirm that the webhook payload matches the expected format to avoid parsing errors.
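A pre-injection check for the domain-mismatch case could look like the Python sketch below. It is a simplified form of cookie domain matching (exact host or subdomain, leading dot ignored) that does not implement public-suffix rules; the function names are hypothetical.

```python
from typing import List, Tuple
from urllib.parse import urlparse


def cookie_domain_matches(cookie_domain: str, target_url: str) -> bool:
    """True if the target host equals the cookie domain or is a subdomain of it."""
    host = (urlparse(target_url).hostname or "").lower()
    domain = cookie_domain.lower().lstrip(".")
    if not domain:
        # No domain set: WebDriver applies the cookie to the current page's host.
        return True
    return host == domain or host.endswith("." + domain)


def filter_cookies(cookies: List[dict], target_url: str) -> Tuple[List[dict], List[dict]]:
    """Split cookies into (usable, rejected) before injecting them."""
    usable, rejected = [], []
    for cookie in cookies:
        if cookie_domain_matches(cookie.get("domain", ""), target_url):
            usable.append(cookie)
        else:
            rejected.append(cookie)
    return usable, rejected
```

Logging the rejected list gives a clearer error than the generic invalid-domain failure WebDriver returns at injection time.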

Pre-Production Checklist

- Verify that the Selenium container is up and reachable.

- Confirm that your OpenAI API key is active and has enough quota.

- Check that the webhook input matches the JSON template (subject, URL, target data, and cookies).

- If proxies are in use, validate the proxy server and the IP whitelist.

- Run tests both with and without cookies to confirm session handling.
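The first checklist item can be scripted against the WebDriver `/status` endpoint, which reports readiness in `value.ready`. The Python sketch assumes a Selenium 4 container at a base URL such as `http://localhost:4444` (older Selenium 3 images serve the endpoint under `/wd/hub`); the function names are hypothetical.

```python
import json
import urllib.request


def is_ready(status_body: str) -> bool:
    """Parse a WebDriver /status response and report whether it accepts sessions."""
    try:
        payload = json.loads(status_body)
    except json.JSONDecodeError:
        return False
    value = payload.get("value") if isinstance(payload, dict) else None
    return isinstance(value, dict) and value.get("ready") is True


def check_selenium(base_url: str, timeout: float = 5.0) -> bool:
    """Fetch <base_url>/status; network errors count as not ready."""
    try:
        with urllib.request.urlopen(base_url.rstrip("/") + "/status", timeout=timeout) as resp:
            return is_ready(resp.read().decode("utf-8"))
    except OSError:
        # URLError, HTTPError, and socket timeouts are all OSError subclasses.
        return False
```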

Result You Get

✓ Saves hours by automating complex scraping tasks

✓ Extracts structured information from logged-in or protected pages

✓ Finds the right URLs automatically through Google search

✓ Uses AI to read screenshots and extract accurate data points

✓ Cleans up sessions to keep the system stable

Conclusion

This workflow reduces manual scraping time from hours to minutes.

It helps you obtain accurate data from behind login walls and anti-bot defenses with AI assistance.

Possible next steps include archiving results in a database or building dashboards for ongoing analysis.

Combining n8n, Selenium, and OpenAI gives you a simple way to handle difficult scraping tasks.