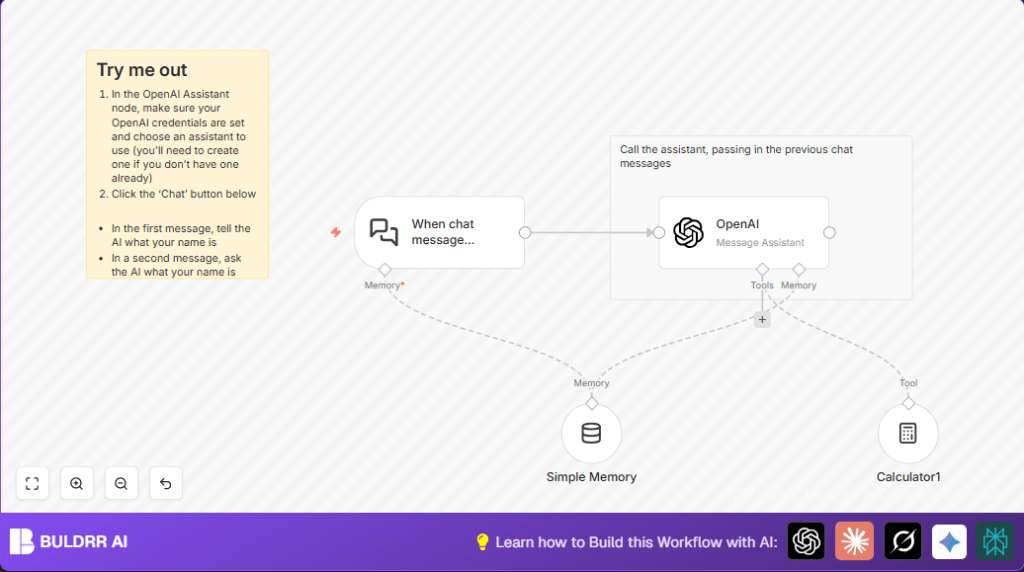

What this workflow does

This workflow runs AI chat conversations that remember earlier messages and can perform calculations when needed. It keeps chat context from being lost and removes the need to switch manually between AI tools. The workflow receives each message, stores the recent conversation history, generates a reply with OpenAI, calls a calculator tool when the conversation requires it, and returns a relevant answer.

This saves time and reduces errors in handling AI chats, which makes support teams' work easier and improves the customer experience.

Tools and services used

- n8n Chat Trigger node: Receives incoming chat messages via a webhook.

- Simple Memory node: Keeps recent chat message history tied to user sessions.

- OpenAI Assistant node: Generates AI chat replies using stored conversation context.

- Calculator node: Performs math or logic calculations inside the chat when needed.

- Sticky Note nodes: Add reminders and instructions inside the workflow editor.

Who should use this workflow

This automation is for support team leads, or anyone managing a high volume of AI chat conversations, who need conversation history to persist and tools to be available inside the chat. It suits teams that want to stop spending hours juggling chat contexts and processing AI requests manually.

People who are new to n8n but already know how to import and configure a basic workflow can use this flow to get faster, more accurate AI responses in their systems.

How the workflow works (Inputs → Processing → Outputs)

Inputs

- Chat messages arriving at the Chat Trigger webhook URL.

- Existing chat session information (session ID) for context retrieval.

Processing Steps

- Session Loading: The Chat Trigger node receives the message and, with the Load Previous Session option enabled, loads the existing chat session for that session ID so the user's history is tracked.

- Context Storage: The Simple Memory node saves the last 20 messages for each session key from the trigger.

- AI Response: The OpenAI Assistant node uses the current user message and the stored context to generate a chat reply.

- Advanced Calculations: The Calculator node evaluates math when the AI identifies the need, and its result is fed back into the AI response process.

- Return Answer: The final AI-produced chat reply is sent back through the webhook to the user.
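The context storage step can be sketched outside n8n as a per-session sliding window. This is a minimal illustration of the idea, not the Simple Memory node's actual implementation; `SessionMemory` and `build_prompt` are hypothetical names, and the window of 20 mirrors the figure quoted above.

```python
from collections import defaultdict, deque
from typing import Dict, List

WINDOW = 20  # messages kept per session, matching the figure in the steps above


class SessionMemory:
    """Hypothetical stand-in for a windowed, per-session chat memory."""

    def __init__(self, window: int = WINDOW) -> None:
        # A deque with maxlen drops its oldest entry once it is full.
        self._store = defaultdict(lambda: deque(maxlen=window))

    def add(self, session_id: str, role: str, text: str) -> None:
        self._store[session_id].append({"role": role, "content": text})

    def context(self, session_id: str) -> List[Dict[str, str]]:
        return list(self._store[session_id])


def build_prompt(memory: SessionMemory, session_id: str, user_message: str) -> List[Dict[str, str]]:
    """Return the stored context plus the new message, then record the message."""
    new_entry = {"role": "user", "content": user_message}
    messages = memory.context(session_id) + [new_entry]
    memory.add(session_id, "user", user_message)
    return messages
```

Because each session ID maps to its own window, two users never see each other's history, and a long conversation only ever sends the most recent messages to the model.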

Outputs

- Context-aware, relevant AI chat answers that reflect the ongoing conversation.

- Calculated math or logic results included in replies as needed.
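One way to picture how a calculated result ends up in a reply is as a tool call: the assistant emits an expression, a calculator evaluates it, and the result is handed back before the final answer is written. The sketch below assumes a hypothetical tool-call shape (`{"tool": ..., "input": ...}`) and a deliberately small arithmetic evaluator; it is not how the n8n Calculator node is built.

```python
import ast
import operator

# Only these operators are accepted; anything else is rejected.
_BINARY = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY = {ast.USub: operator.neg, ast.UAdd: operator.pos}


def calculate(expression: str):
    """Evaluate a plain arithmetic expression without using eval()."""

    def _eval(node):
        if isinstance(node, ast.Constant):
            value = node.value
            if isinstance(value, (int, float)) and not isinstance(value, bool):
                return value
        elif isinstance(node, ast.BinOp) and type(node.op) in _BINARY:
            return _BINARY[type(node.op)](_eval(node.left), _eval(node.right))
        elif isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY:
            return _UNARY[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression, mode="eval").body)


def handle_tool_call(call: dict) -> dict:
    """Answer a hypothetical tool call of the form {"tool": "calculator", "input": "..."}."""
    if call.get("tool") != "calculator":
        raise ValueError("unknown tool")
    return {"tool": "calculator", "output": str(calculate(call["input"]))}
```

Walking the parsed syntax tree instead of calling `eval()` means a message such as `__import__('os')` is rejected rather than executed, which matters when the expression originates from user chat input.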

Beginner step-by-step: How to use this workflow in n8n

Step 1: Import the Workflow

- Download the workflow file using the Download button on this page.

- Open the n8n editor and choose Import from File.

- Select the downloaded workflow file to add it into n8n.

Step 2: Configure Credentials and Settings

- Add your OpenAI API Key in n8n credentials settings.

- Check that the Chat Trigger node's webhook is publicly reachable and that its session options are set as intended.

- Update the assistantId and any session keys if they differ from the defaults.

- Modify any IDs, emails, channels, or folders if used within nodes.

Step 3: Test the Workflow

- Send a sample chat message to the webhook URL shown in the Chat Trigger node.

- Check if the AI responds correctly and conversation history is kept.
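A test message can also be sent from a short script. The sketch below is an assumption-heavy example: the JSON field names `action`, `sessionId`, and `chatInput` are assumed defaults for the chat webhook and should be checked against your Chat Trigger node, and the URL you pass in is whatever the node displays.

```python
import json
import urllib.request


def build_chat_request(webhook_url: str, session_id: str, text: str) -> urllib.request.Request:
    """Build a POST request carrying one chat message (field names are assumptions)."""
    payload = {
        "action": "sendMessage",  # assumed action name
        "sessionId": session_id,  # must stay the same across messages to keep history
        "chatInput": text,        # assumed key for the user's message
    }
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request: urllib.request.Request, timeout: float = 30.0) -> str:
    """Send the request and return the raw response body as text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

To check that history is kept, send two messages with the same `session_id`, for example "My order number is 1234" followed by "What is my order number?", and confirm that the second reply refers back to the first.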

Step 4: Activate for Production

- Once testing works, toggle the workflow status to Active.

- Use the webhook URL in your chat client or integration to start real user chats.

A self-hosted n8n instance is recommended for full control and security.

Common mistakes and how to avoid them

- Not enabling Load Previous Session in Chat Trigger causes chat context loss.

- An incorrect session key expression breaks memory storage or attaches messages to the wrong conversation.

- Missing or wrong OpenAI API credentials cause AI authentication failures.

- If the Calculator node's output is not connected to the AI tool input, the assistant loses its calculation features.

Customization ideas

- Change the number of recent messages kept by adjusting Context Window Length in Simple Memory.

- Switch AI models or assistants by updating assistantId in the OpenAI Assistant node.

- Add more tools like search or database integrations from LangChain nodes for richer chat features.

Pre-production checklist

- Confirm OpenAI API keys are valid and connected in n8n.

- Send sample chat messages to the webhook and confirm that the trigger fires.

- Verify Simple Memory stores session-based chat contexts correctly.

- Check that Calculator node outputs integrate with AI reply logic.

- Save backup copies of the workflow JSON before deployment.
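The backup step is easy to script. The following is a minimal sketch, assuming the workflow has already been exported to a local JSON file; `backup_workflow` and the paths passed to it are hypothetical.

```python
import json
import shutil
from datetime import datetime, timezone
from pathlib import Path


def backup_workflow(source: Path, backup_dir: Path) -> Path:
    """Copy an exported workflow JSON file to a timestamped backup."""
    # Parsing first catches a truncated or corrupted export before it is archived.
    with source.open(encoding="utf-8") as handle:
        json.load(handle)

    backup_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    target = backup_dir / f"{source.stem}-{stamp}{source.suffix}"
    shutil.copy2(source, target)
    return target
```

Timestamped file names keep earlier backups from being overwritten, so you can roll back to the version that was live before any given change.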

Summary and final results

✓ This workflow handles chat messages while remembering past conversations.

✓ Users get AI answers with math or logic done inside the chat.

✓ Manual tracking of sessions and calculations is no longer needed.

✓ Teams spend less time on AI conversations and make fewer errors.

→ Produces faster, smarter AI chats that keep context and handle calculations automatically.