What This Automation Does

This workflow automates deep research from a user’s question to a detailed report saved in Notion.

It saves analysts from spending hours on manual searching and writing.

Users get clear, well-sourced research reports without digging through many websites themselves.

The process starts from a user prompt and asks AI-generated clarifying questions. It then loops through search query generation, web searches, and content extraction.

It gathers facts recursively, then writes a full report.

Finally, it uploads the report with its sources to a Notion page.

This saves time, reduces manual errors, and produces research of consistent quality.

It keeps running even if the user closes the form after submitting.

Tools and Services Used

- n8n: Workflow automation platform that manages all steps.

- OpenAI API & LangChain LLM: Generates questions, queries, learnings, and the final report.

- Apify API: Runs web searches and scrapes page content programmatically.

- Notion API: Saves research data and final reports into a Notion database.

Who Should Use This Workflow

This automation fits analysts and researchers who need deep, detailed information quickly.

It is for users who want to avoid manual web searching and writing.

Once it is set up, novices can use it inside n8n without writing code.

Businesses that produce research reports regularly save time and get more consistent data.

The workflow works best when questions are complex and data volume is large.

Basic Inputs and Outputs

Inputs

- A research prompt, entered by the user in an n8n Form node.

- Depth and breadth parameters, chosen by the user to control search intensity.

- The user's answers to AI-generated clarifying questions.
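The depth and breadth values may arrive from the form as text, so it helps to validate them before the research loop uses them. The Python sketch below clamps both into a fixed range. The field names `depth` and `breadth` and the limits of 1 to 5 and 1 to 10 are assumptions for illustration, not the template's actual settings.

```python
# Hypothetical limits; the real template may use different maximums.
DEPTH_RANGE = (1, 5)
BREADTH_RANGE = (1, 10)


def clamp_int(raw, low, high, default):
    """Parse a form value as an int and clamp it into [low, high]."""
    try:
        value = int(str(raw).strip())
    except (TypeError, ValueError):
        return default  # missing or non-numeric input falls back to the default
    return max(low, min(high, value))


def parse_search_params(form):
    """Return validated depth and breadth from a form submission dict.

    The keys "depth" and "breadth" are assumed field names.
    """
    depth = clamp_int(form.get("depth"), *DEPTH_RANGE, default=DEPTH_RANGE[0])
    breadth = clamp_int(form.get("breadth"), *BREADTH_RANGE, default=BREADTH_RANGE[0])
    return {"depth": depth, "breadth": breadth}


print(parse_search_params({"depth": "3", "breadth": "25"}))
```

Clamping rather than rejecting keeps a typo from failing the whole run. The trade-off is that the user silently gets a smaller search than requested.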

Processing Steps

- Convert the user prompt into AI-generated search queries, recursively.

- Scrape web content for each search query using Apify.

- Extract key learnings from the scraped content with OpenAI models.

- Repeat the search-and-learn cycle until the desired depth is reached.

- Generate a comprehensive Markdown report from the accumulated learnings.

- Transform the Markdown into Notion blocks.

- Upload the structured report and its sources to a Notion page.
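The search-and-learn loop in these steps can be sketched in Python as follows. The three helper functions are hypothetical stand-ins for the Generate SERP Queries, Apify, and OpenAI learning nodes, and they are faked here so the control flow runs without any API. Halving the breadth at each deeper level is an assumption borrowed from common deep-research designs, not something this workflow is confirmed to do.

```python
import math
from typing import Callable, List, Optional


def deep_research(
    query: str,
    depth: int,
    breadth: int,
    generate_queries: Callable[[str, int, List[str]], List[str]],
    search_and_scrape: Callable[[str], List[dict]],
    extract_learnings: Callable[[str, List[dict]], List[str]],
    learnings: Optional[List[str]] = None,
    urls: Optional[List[str]] = None,
) -> dict:
    """Recursively search, scrape, and extract learnings for `depth` levels."""
    learnings = list(learnings or [])
    urls = list(urls or [])
    for serp_query in generate_queries(query, breadth, learnings):
        pages = search_and_scrape(serp_query)      # stand-in for the Apify step
        for page in pages:
            if page["url"] not in urls:            # keep each source URL once
                urls.append(page["url"])
        new_learnings = extract_learnings(serp_query, pages)  # stand-in for OpenAI
        learnings.extend(new_learnings)
        if depth > 1:
            # Go one level deeper on this query with half the breadth (assumed).
            follow_up = serp_query + "\n" + "\n".join(new_learnings)
            deeper = deep_research(
                follow_up, depth - 1, math.ceil(breadth / 2),
                generate_queries, search_and_scrape, extract_learnings,
                learnings, urls,
            )
            learnings, urls = deeper["learnings"], deeper["urls"]
    return {"learnings": learnings, "urls": urls}


# Usage with fake helpers (hypothetical), so no external services are needed.
def fake_queries(query, breadth, learned):
    return [f"q{len(learned)}-{i}" for i in range(breadth)]


def fake_search(serp_query):
    return [{"url": f"https://example.com/{serp_query}", "text": "..."}]


def fake_learnings(serp_query, pages):
    return [f"fact about {serp_query}"]


result = deep_research("solid-state batteries", 2, 2,
                       fake_queries, fake_search, fake_learnings)
```

Each recursive call receives the accumulated lists and hands back an extended copy. This is roughly analogous to a subworkflow call that receives state and returns it.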

Outputs

- A Notion database entry with the research title and status updates.

- A Notion page containing the formatted research report.

- A bulleted list of source URLs appended at the end of the report.
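These outputs correspond to two kinds of Notion API payloads. The first is a page-properties update for the status. The second is a list of `bulleted_list_item` blocks for the sources, which can be sent to Notion's "append block children" endpoint. The sketch below builds both as plain dicts. It assumes a database property named "Status" of Notion's `status` type; if your database uses a `select` property instead, the inner key would be `select`.

```python
def status_properties(status_name: str) -> dict:
    """Properties payload for updating a page's Status property.

    Assumes a property called "Status" of Notion's `status` type.
    """
    return {"properties": {"Status": {"status": {"name": status_name}}}}


def source_bullets(urls: list) -> list:
    """Build one Notion bulleted_list_item block per unique URL, as a link."""
    blocks = []
    for url in dict.fromkeys(urls):  # drop duplicate URLs, keep first-seen order
        blocks.append({
            "object": "block",
            "type": "bulleted_list_item",
            "bulleted_list_item": {
                "rich_text": [
                    {"type": "text", "text": {"content": url, "link": {"url": url}}}
                ]
            },
        })
    return blocks


# Example request bodies: {"children": source_bullets(urls)} for the append call,
# and status_properties("Done") for the page update.
```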

Beginner Step-by-Step: How to Build and Use This Workflow in n8n

Importing Workflow

- Download the workflow file using the Download button on this page.

- Open the n8n editor where you manage workflows.

- Choose Import from File and select the downloaded workflow.

Configuring Credentials and Settings

- Add your OpenAI API key in the Credentials section.

- Add your Apify API key with the “Bearer ” prefix (including the trailing space) for web scraping.

- Connect Notion with an API key and verify the ID of the database that will store the reports.

- Update any placeholders, such as emails, folder IDs, or database properties, wherever the workflow uses them.
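The Apify API accepts the token in an `Authorization: Bearer <token>` header. Because of this, a header credential needs the prefix in its value, and a missing or doubled prefix is a common cause of auth errors. This small Python sketch normalizes whatever the user pasted. The function name is hypothetical.

```python
def apify_auth_header(token: str) -> dict:
    """Return an Authorization header for the Apify API.

    Strips whitespace and any "Bearer " prefix already present, then adds
    exactly one, so both "abc" and "Bearer abc" yield "Bearer abc".
    """
    token = token.strip()
    if token.lower().startswith("bearer "):
        token = token[len("bearer "):].strip()
    if not token:
        raise ValueError("Apify API token is empty")
    return {"Authorization": f"Bearer {token}"}
```

In an n8n header-based credential, this means the header name is `Authorization` and the value is `Bearer ` followed by your token.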

Testing and Activating the Workflow

- Run the workflow with a sample research prompt and low depth and breadth values to test the outputs.

- Check that results are saved properly in Notion with the correct status.

- Fix any credential errors or missing inputs you find.

- Once it works, activate the workflow to handle live requests.

For users who want more control or better security, consider self-hosting n8n.

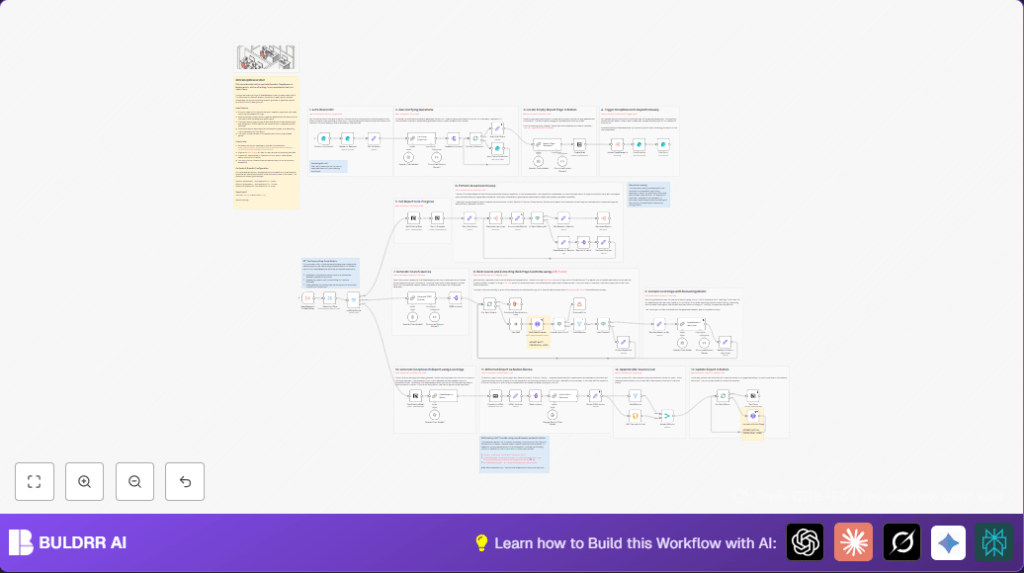

Key Workflow Steps Explained

Input Step

The user submits a research question and parameters via the Research Request form node.

The workflow assigns a unique request ID and prepares the variables used in later steps.
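As an illustration, one way to give each request a unique ID and an empty starting state is Python's `uuid.uuid4()`. The field names below are hypothetical; the template may store different variables or rely on n8n's own execution ID instead.

```python
import uuid
from datetime import datetime, timezone


def init_request(prompt: str, depth: int, breadth: int) -> dict:
    """Create the starting state for one research request (hypothetical fields)."""
    return {
        "request_id": str(uuid.uuid4()),      # random, effectively unique ID
        "prompt": prompt.strip(),
        "depth": depth,
        "breadth": breadth,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "learnings": [],                      # filled in by the research cycles
        "urls": [],                           # source URLs collected along the way
    }


state = init_request("  AI chip export rules ", 2, 3)
```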

Clarification Loop

The OpenAI Clarifying Questions node generates three clarifying questions.

The user answers these questions in the follow-up Ask Clarity Questions form node.

This narrows the research focus before the deep search begins.
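After the user answers, the original prompt and the question-and-answer pairs need to be merged into a single brief for the research loop. The Python sketch below shows one plain-text format for that. The layout and function name are assumptions; the template's prompts may phrase it differently.

```python
def build_research_query(prompt: str, questions: list, answers: list) -> str:
    """Merge the original prompt with clarifying Q&A into one research brief."""
    if len(questions) != len(answers):
        raise ValueError("each clarifying question needs exactly one answer")
    lines = [f"Initial query: {prompt.strip()}", "Follow-up questions and answers:"]
    for question, answer in zip(questions, answers):
        lines.append(f"Q: {question.strip()}")
        # Keep unanswered questions visible so the model knows they are open.
        lines.append(f"A: {answer.strip() or '(no answer given)'}")
    return "\n".join(lines)


brief = build_research_query(
    "EV battery recycling",
    ["Which region?", "What time frame?", "Who is the audience?"],
    ["Europe", "2020 onward", ""],
)
```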

Recursive Search and Learn

The workflow uses subworkflow triggers to run each research cycle.

Each cycle generates search queries in the Generate SERP Queries node.

It then scrapes web pages with the Apify RAG Web Browser node.

It extracts learnings from the scraped content with the OpenAI DeepResearch Learnings node.

Learnings accumulate across cycles until the depth limit is reached.
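Before the search step, a query-generation step like Generate SERP Queries has to turn the model's reply into a clean list of at most `breadth` queries. The Python sketch below assumes a hypothetical JSON reply of the form `{"queries": [{"query": ..., "researchGoal": ...}]}`. It drops blank and case-insensitive duplicate queries, then caps the list.

```python
import json


def parse_serp_queries(llm_output: str, breadth: int) -> list:
    """Parse queries from a model's JSON reply and keep at most `breadth`.

    The {"queries": [{"query": ..., "researchGoal": ...}]} shape is assumed.
    """
    data = json.loads(llm_output)
    seen = set()
    queries = []
    for item in data.get("queries", []):
        text = str(item.get("query", "")).strip()
        key = text.lower()
        if text and key not in seen:   # skip blanks and repeated queries
            seen.add(key)
            queries.append({"query": text, "goal": item.get("researchGoal", "")})
    return queries[:breadth]


reply = json.dumps({"queries": [
    {"query": "EU battery recycling rules", "researchGoal": "regulation"},
    {"query": "eu battery recycling rules"},
    {"query": "battery cost trends"},
    {"query": "   "},
]})
```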

Report Generation and Notion Upload

After the deepest recursion level completes, the DeepResearch Report node writes the report as Markdown text.

The workflow creates a Notion database row and updates its status as the work progresses.

It converts the Markdown to HTML, splits it into chunks, and formats the chunks as Notion blocks.

It uploads the blocks to the Notion page and appends the list of source URLs.

Finally, it sets the report status to Done to close the process.
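The splitting step matters because of Notion's request limits. A single text object holds at most 2,000 characters, and one append request accepts at most 100 child blocks. The Python sketch below shows the chunking idea only. It skips the HTML conversion and treats every chunk as a plain paragraph block; a fuller conversion would also map headings, lists, and links to their own block types. Function names are hypothetical.

```python
MAX_TEXT = 2000      # Notion limit on characters in one text object
MAX_CHILDREN = 100   # Notion limit on blocks in one append request


def split_text(text: str, limit: int = MAX_TEXT) -> list:
    """Split text into pieces of at most `limit` chars, preferring spaces."""
    pieces = []
    while len(text) > limit:
        cut = text.rfind(" ", 0, limit)
        if cut <= 0:
            cut = limit  # no usable space: hard cut at the limit
        pieces.append(text[:cut])
        text = text[cut:].lstrip()
    if text:
        pieces.append(text)
    return pieces


def paragraph_block(text: str) -> dict:
    return {
        "object": "block",
        "type": "paragraph",
        "paragraph": {"rich_text": [{"type": "text", "text": {"content": text}}]},
    }


def to_block_batches(markdown: str) -> list:
    """Turn report text into batches of paragraph blocks, each batch <= 100."""
    blocks = []
    for para in markdown.split("\n\n"):
        for piece in split_text(para.strip()):
            blocks.append(paragraph_block(piece))
    return [blocks[i:i + MAX_CHILDREN] for i in range(0, len(blocks), MAX_CHILDREN)]
```

Each batch would then go out as its own append request, in order, so the page content stays in sequence.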

Customization Ideas

- Change the maximum depth and breadth values in the Research Request form to get more or less detail.

- Switch OpenAI model versions, or use other models such as Google Gemini.

- Replace Apify with other web scrapers if desired.

- Adapt Notion API targets for different databases or page layouts.

- Edit prompts in AI nodes to tailor report style or length.

Troubleshooting Common Issues

Apify Auth Error: Usually caused by a wrong API key or header format.

Fix it by checking the Apify credentials and making sure the key has the “Bearer ” prefix.

No Content from Web Scrapes: Usually caused by poor search queries or network issues.

Review the queries the generator produces and check your Apify usage quota.

Report Upload Failures: Often caused by malformed Markdown or API rate limits.

Try simpler Markdown, add retry logic, or upload smaller content chunks.
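In n8n, the simplest form of retry logic is often the node's Retry On Fail setting. The Python sketch below shows the same idea with exponential backoff and jitter, for cases where uploads run in custom code. `RateLimitError` and `flaky_upload` are hypothetical: the first stands for whatever your client raises on an HTTP 429, and the second is a fake uploader. Notion's documentation also asks clients to respect the `Retry-After` header when it is present.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error an upload call raises on HTTP 429."""


def with_retries(call, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Run `call()`, retrying on RateLimitError with exponential backoff + jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts:
                raise  # give up and surface the error after the last attempt
            delay = base_delay * 2 ** (attempt - 1)   # 1s, 2s, 4s, ...
            sleep(delay + random.uniform(0, delay / 2))


# Usage with a fake uploader that hits the rate limit twice, then succeeds.
attempts = []


def flaky_upload():
    attempts.append(1)
    if len(attempts) < 3:
        raise RateLimitError("429 Too Many Requests")
    return "uploaded"


result = with_retries(flaky_upload, sleep=lambda seconds: None)
```

Injecting `sleep` keeps the function testable without real waiting.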

Pre-Production Checklist

- Verify that your OpenAI API key has access to the required models.

- Test Apify credentials with a sample crawl.

- Ensure the Notion database exists and has the required fields.

- Test the trigger form in public mode to confirm it captures input correctly.

- Run a small test request and observe each step’s output.

- Back up Notion data before large tests to avoid data loss.

Deployment Guide

After setup and testing, activate the workflow in n8n.

Share the form URL with users to collect requests.

Check executions and Notion pages regularly.

Watch API usage and scale n8n resources if needed.

Summary

✓ Automates deep research from input to structured report.

✓ Saves hours by replacing manual web searching.

✓ Produces consistent, detailed research with clear sources.

✓ Works asynchronously, so users do not have to stay online while it runs.

✓ Easy to set up inside n8n by following the configuration steps.