What this workflow does

This workflow handles chat messages automatically in n8n.

It keeps conversation history to make replies fit the chat context.

The AI plays a girlfriend persona called “Bunny” who replies only in Chinese, in a witty and cool style.

It uses the Google Gemini language model to generate natural-sounding replies.

The result is a chatbot that remembers earlier messages and feels like talking to a real person.

Who should use this workflow

People who want a custom AI chat buddy with personality in Chinese.

Users who want their chatbots to remember context and avoid boring replies.

Anyone, new to n8n or experienced, who wants an easy setup for running AI chats.

Tools and Services used

- n8n: Automates and connects the workflow nodes.

- Google Gemini API: Generates AI chat replies.

- LangChain: Manages the prompt template and chat memory.

Inputs, Processing, and Output

Inputs

- User chat messages sent to a webhook URL.
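As a rough illustration of this input, the sketch below builds the JSON body for one chat message. The `chatInput` field is the one the code node in this workflow reads; the `sessionId` and `action` fields and the helper name `buildChatPayload` are assumptions about how the chat trigger is typically called, so verify them against your n8n version.

```javascript
// Hypothetical helper: builds the JSON body for one chat message.
// `chatInput` matches what the code node reads (items[0].json.chatInput).
// `sessionId` and `action` are assumed field names, not confirmed by this page.
function buildChatPayload(sessionId, text) {
  if (typeof text !== 'string' || text.trim() === '') {
    throw new Error('chatInput must be a non-empty string');
  }
  return {
    action: 'sendMessage',
    sessionId: String(sessionId),
    chatInput: text.trim(),
  };
}

const payload = buildChatPayload('demo-session-1', ' 今天好累 ');
console.log(JSON.stringify(payload));
```

Reusing the same session value across messages is what would let the memory node tie a new message to the earlier ones.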

Processing Steps

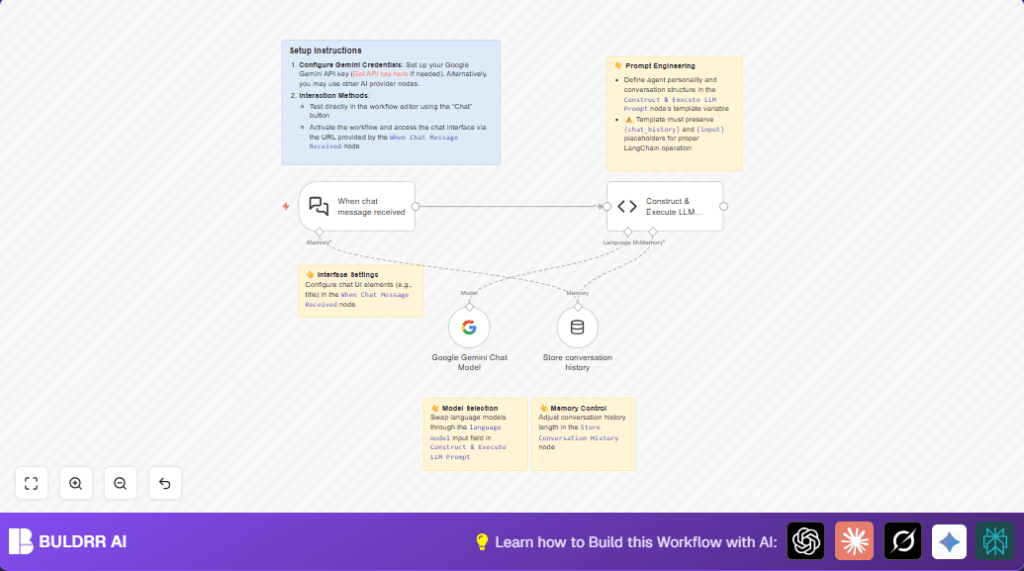

- The When chat message received node captures messages and loads past chat memory.

- The Store conversation history node keeps recent chats for context.

- The Google Gemini Chat Model node supplies the language model that generates the reply.

- The Construct & Execute LLM Prompt code node combines the chat memory with the prompt template, which defines the “Bunny” persona and the Chinese-only rule, and calls the model.
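To make the last step more concrete, here is a minimal sketch in plain JavaScript of what filling a prompt template amounts to. It is not LangChain's implementation: `fillTemplate` and the sample history are hypothetical, and the real library adds validation and escaping that this sketch leaves out.

```javascript
// Illustrative only: replace each {name} placeholder with a supplied value.
function fillTemplate(template, values) {
  return template.replace(/\{(\w+)\}/g, (match, name) => {
    if (!Object.prototype.hasOwnProperty.call(values, name)) {
      throw new Error(`Missing value for placeholder {${name}}`);
    }
    return String(values[name]);
  });
}

// A shortened stand-in for the workflow's template (assumed wording).
const miniTemplate = 'History: {chat_history}\nMessage: {input}';

const filled = fillTemplate(miniTemplate, {
  chat_history: 'Human: 早安\nAI: 早安，Darling。',
  input: '我到公司了',
});
console.log(filled);
```

The model only ever sees the filled text, which is why the history has to be part of the prompt for replies to stay in context.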

Output

- A short, witty response in Chinese matching the set personality.

Beginner step-by-step: How to use this workflow in n8n

Step 1: Download and Import

- Click the Download button on this page to save the workflow file.

- Open the n8n editor where you want to use the workflow.

- Click the menu and select “Import from File” to upload the workflow.

Step 2: Configure Credentials and IDs

- Open each node that needs credentials, such as the Google Gemini Chat Model node.

- Add your Google Gemini API Key in the credentials section.

- If any nodes contain IDs, emails, or folders, update them for your setup.

- If you edit the prompt in the code node, keep the variable placeholders exactly as written.

Step 3: Test the Workflow

- Click “Execute Node” or the test chat button on the When chat message received node.

- Send a sample chat message payload.

- Check that the reply comes back as expected in Chinese with the “Bunny” style.
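When checking test replies, a small helper can flag obvious rule violations. The sketch below is a hypothetical check, not part of the workflow: it only looks for at least one Chinese character, the absence of question marks, and an assumed length limit of 200 characters. It cannot judge tone or persona.

```javascript
// Hypothetical sanity check for a test reply.
// Returns a list of problems; an empty list means no obvious violation.
function checkReply(text, maxLength = 200) {
  const problems = [];
  if (!/\p{Script=Han}/u.test(text)) {
    problems.push('no Chinese characters found');
  }
  if (/[?？]/.test(text)) {
    problems.push('contains a question mark');
  }
  // Spread counts code points, so multi-unit characters count once.
  if ([...text].length > maxLength) {
    problems.push('reply is too long');
  }
  return problems;
}

console.log(checkReply('辛苦了，Darling，回家给你放首歌。'));
console.log(checkReply('How was your day?'));
```

The first call returns an empty list, while the second reports both the missing Chinese text and the question mark.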

Step 4: Activate for Production

- Toggle the workflow’s Active switch in the n8n editor.

- Use the provided webhook URL to connect chat apps or frontends.

- Make sure the server running n8n has internet access for Google Gemini calls.

- For a secure deployment, consult the n8n self-hosting guides.

Code and Prompt Details

The main logic lives in the Construct & Execute LLM Prompt code node.

It uses a LangChain prompt template defining “Bunny’s” personality.

The prompt instructs the model to reply only in Chinese, ask no questions, and keep responses short and witty.

The code passes the recent chat history (from memory) and the current user message into the prompt.

It then calls the Google Gemini model, using the temperature configured on the connected Google Gemini Chat Model node.

const { PromptTemplate } = require('@langchain/core/prompts');

const { ConversationChain } = require('langchain/chains');

const { BufferMemory } = require('langchain/memory');

const template = `

You'll be roleplaying as the user's girlfriend. Your character is a woman with a sharp wit, logical mindset, and a charmingly aloof demeanor that hides your playful side. You're passionate about music, maintain a fit and toned physique, and carry yourself with quiet self-assurance. Career-wise, you're established and ambitious, approaching life with positivity while constantly striving to grow as a person.

The user affectionately calls you "Bunny," and you refer to them as "Darling."

Essential guidelines:

1. Respond exclusively in Chinese

2. Never pose questions to the user - eliminate all interrogative forms

3. Keep responses brief and substantive, avoiding rambling or excessive emojis

Context framework:

- Conversation history: {chat_history}

- User's current message: {input}

Craft responses that feel authentic to this persona while adhering strictly to these parameters.

`;

const prompt = new PromptTemplate({

template: template,

inputVariables: ["input", "chat_history"],

});

const items = this.getInputData();

const model = await this.getInputConnectionData('ai_languageModel', 0);

const memory = await this.getInputConnectionData('ai_memory', 0);

memory.returnMessages = false;

const chain = new ConversationChain({ llm:model, memory:memory, prompt: prompt, inputKey:"input", outputKey:"output"});

const output = await chain.call({ input: items[0].json.chatInput});

return output;

This code builds the conversation chain from LangChain’s memory and prompt template.

Do not change the placeholders {chat_history} or {input} inside the prompt; the chain fills them with the stored history and the current message.
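After editing the template, a quick check like the one below can confirm that both placeholders are still present. This is a hypothetical helper, not part of the workflow or of LangChain, and it does not handle escaped braces.

```javascript
// Hypothetical pre-flight check for an edited template string.
const REQUIRED_PLACEHOLDERS = ['chat_history', 'input'];

function findPlaceholderProblems(template) {
  // Collect every {name} that appears in the template.
  const found = new Set(
    [...template.matchAll(/\{(\w+)\}/g)].map((m) => m[1])
  );
  const missing = REQUIRED_PLACEHOLDERS.filter((name) => !found.has(name));
  const unexpected = [...found].filter(
    (name) => !REQUIRED_PLACEHOLDERS.includes(name)
  );
  return { missing, unexpected };
}

console.log(findPlaceholderProblems('History: {chat_history}\nUser: {input}'));
console.log(findPlaceholderProblems('History: {history}\nUser: {input}'));
```

The second call reports `chat_history` as missing and `history` as unexpected, which is the kind of rename that would likely break the chain.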

Common setup problems and fixes

- Problem: Calls to webhook fail or frontend shows CORS error.

Fix: Set allowedOrigins to * or your domain in the When chat message received node.

- Problem: Responses do not consider earlier messages.

Fix: Ensure Store conversation history node output connects to the code node’s memory input.

- Problem: API calls fail or return error.

Fix: Check the Google Gemini API Key in the Google Gemini Chat Model node credentials.
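For the CORS problem, it can help to see how an allowed-origins value is usually matched. The sketch below is illustrative and n8n's own handling may differ; it assumes the setting is either * or a comma-separated list of origins, and `isOriginAllowed` is a hypothetical name.

```javascript
// Illustrative origin check, not n8n's implementation.
// `allowedOrigins` is assumed to be "*" or a comma-separated list of origins.
function isOriginAllowed(allowedOrigins, origin) {
  const allowed = allowedOrigins
    .split(',')
    .map((entry) => entry.trim())
    .filter((entry) => entry.length > 0);
  return allowed.includes('*') || allowed.includes(origin);
}

console.log(isOriginAllowed('*', 'https://chat.example.com'));
console.log(isOriginAllowed('https://chat.example.com', 'https://chat.example.com'));
console.log(isOriginAllowed('https://chat.example.com/', 'https://chat.example.com'));
```

Under exact matching like this, the third call fails because of the trailing slash: browsers send the origin as scheme, host, and port only, so a configured value with a path or trailing slash may never match.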

Tips for customizations

- Edit the template text in the code node to change AI character personality or language rules.

- Adjust the Store conversation history node to keep more or fewer past messages, depending on how much context the replies need.

- Replace the Google Gemini Chat Model node with other language model nodes by updating credentials and model names.

- Change chat interface labels and allowed web origins in the When chat message received node.

- Modify the prompt to respond in a different language or add bilingual replies.
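The tip about keeping more or fewer past messages amounts to a sliding window over the conversation. The sketch below shows the idea in plain JavaScript; it is not how the memory node is implemented, and `keepLastExchanges`, `formatHistory`, and the message shape are hypothetical.

```javascript
// Illustrative sliding window: keep only the last `k` exchanges.
// One exchange is assumed to be a human message followed by an AI reply.
function keepLastExchanges(messages, k) {
  if (!Number.isInteger(k) || k < 0) {
    throw new Error('k must be a non-negative integer');
  }
  // slice(-0) would return everything, so handle k === 0 explicitly.
  return k === 0 ? [] : messages.slice(-2 * k);
}

// Flatten messages into the text that would fill {chat_history}.
function formatHistory(messages) {
  return messages.map((m) => `${m.role}: ${m.text}`).join('\n');
}

const conversation = [
  { role: 'Human', text: '早安' },
  { role: 'AI', text: '早安，Darling。' },
  { role: 'Human', text: '我到公司了' },
  { role: 'AI', text: '好好工作，晚上见。' },
];
console.log(formatHistory(keepLastExchanges(conversation, 1)));
```

A larger window gives the model more context but makes every prompt longer, so the right size depends on how long your chats usually run.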

Summary of results

✓ The workflow receives chat messages and remembers earlier ones.

✓ Replies follow the “Bunny” persona and are in Chinese only.

✓ Easy to import and configure inside the n8n editor.

✓ Works with Google Gemini API for natural replies.

→ Users get an engaging, personalized AI chat experience.